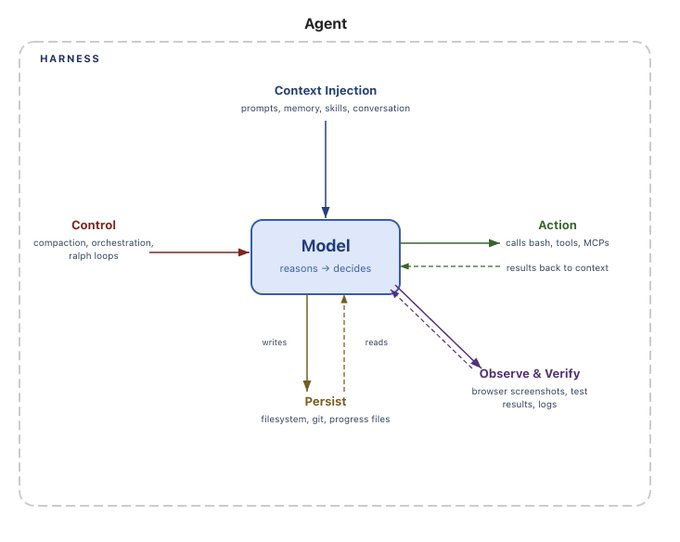

Agent harnesses are becoming the dominant way to build agents, and they are not going anywhere. These harnesses are intimately tied to agent memory. If you use a closed harness, especially one behind a proprietary API, you are choosing to yield control of your agent’s

This year, we're doing it again. Interrupt 2026 is May 13–14 at The Midway in San Francisco, and the lineup, the format, and the scale have all leveled up.

Today we’re launching Deep Agents deploy in beta. Deep Agents deploy is the fastest way to deploy a model-agnostic, open-source agent harness in a production-ready way. Deep Agents deploy is built for an open world. It’s built on Deep Agents, an open-source, model

AI agents work best when they reflect the knowledge and judgment your team has built over time. Some of that is institutional knowledge that’s already documented and easy for an agent to use as-is. But most great organizations also rely on tacit knowledge that lives inside their employees’ minds.

By Vivek Trivedy, Product Manager @ LangChain

💡TL;DR: We can build better agents by building better harnesses. But to autonomously build a “better” harness, we need a strong learning signal to “hill-climb” on. We share how we use evals as that signal, plus design decisions that help our agent generalize

TL;DR: We’ve released new minor versions of deepagents & deepagentsjs, featuring async (non-blocking) subagents, expanded multi-modal filesystem support, and more. See the changelog for details.

Async subagents

Deep Agents can now delegate work to remote agents that run in the background. As opposed to the existing inline subagents, which

Arcade is the MCP runtime for production agents, delivering secure agent authorization, reliable tools, and governance. This integration gives your agents access to Arcade’s collection of 7,500+ agent-optimized tools through a single secure gateway.

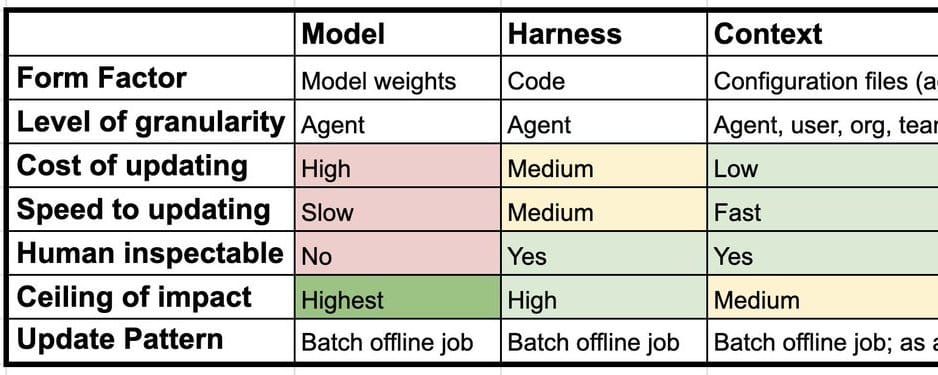

Most discussions of continual learning in AI focus on one thing: updating model weights. But for AI agents, learning can happen at three distinct layers: the model, the harness, and the context. Understanding the difference changes how you think about building systems that improve over time.

The three main layers

💡TL;DR: Open models like GLM-5 and MiniMax M2.7 now match closed frontier models on core agent tasks — file operations, tool use, and instruction following — at a fraction of the cost and latency. Here's what our evals show and how to start using them in Deep Agents. Over the

It feels like spring has sprung here, and so has a lot of news: a new NVIDIA integration, ticket sales for Interrupt 2026, and the launch of LangSmith Fleet (formerly Agent Builder).

Build production AI agents on MongoDB Atlas — with vector search, persistent memory, natural-language querying, and end-to-end observability built in.

A practical checklist for agent evaluation: error analysis, dataset construction, grader design, offline & online evals, and production readiness.

Discover how Kensho, S&P Global’s AI innovation engine, used LangGraph to create its Grounding framework, a unified agentic access layer that solves fragmented financial data retrieval at enterprise scale.

💡TL;DR: The best agent evals directly measure an agent behavior we care about. Here's how we source data, create metrics, and run well-scoped, targeted experiments over time to make agents more accurate and reliable.

Evals shape agent behavior

We’ve been curating evaluations to measure and improve Deep Agents. Deep

Agent harnesses are what help build an agent: they connect an LLM to its environment and let it act. When you’re building an agent, you’ll likely want to build an application-specific agent harness. “Agent Middleware” empowers you to build on top of LangChain and Deep

Moda uses a multi-agent system built on Deep Agents and traced through LangSmith to let non-designers create and iterate on professional-grade visuals.

If you're attending Google Cloud Next 2026 in Las Vegas this year and working on agent development, here's what we have planned.

Visit Us at Booth #5006

We'll be at Booth #5006 in the Expo Hall at the Mandalay Bay Convention Center, April 22-24. Our engineering team will be running

Agent Builder is now Fleet: a central place for all of your teams to build, use, and manage agents across the enterprise.

Debugging agents is different from debugging anything else you've built. Traces run hundreds of steps deep, prompts span thousands of lines, and when something goes wrong, the context that caused it is buried somewhere in the middle. We built Polly to be the AI assistant that can read a 300-step

Spin up a sandbox in a single line of code with the LangSmith SDK. Now in Private Preview.

Comprehensive agent engineering platform combined with NVIDIA AI enables enterprises to build, deploy, and monitor production-grade AI agents at scale

Press Release

SAN FRANCISCO, March 16, 2026 /PRNewswire/ — LangChain, the agent engineering company behind LangSmith and open-source frameworks that have surpassed 1 billion downloads, today announced a comprehensive integration with

We’re excited to introduce the deploy CLI, a new set of commands within the langgraph-cli package that makes it simple to deploy and manage agents directly from the command line. The first command in this new set, langgraph deploy, lets you deploy an agent to LangSmith Deployment in a