Last updated: 2026/04/26 13:01

Start your second innings with ChatGPT. Credits: Director: Abhinav Pratiman; DOP: Tassaduq Hussain; Production House: Early Man Film; Creative agency: Hue & Why

“I thought building this internal tool was going to take me days…but with Codex and GPT-5.5, I was able to do it in under an hour.” Denis from Perplexity saw GPT-5.5 cut token usage by 56% while running the same agentic workflows faster and more efficiently. Build with GPT-5.5: https://openai.com/index/introducing-gpt-5-5/

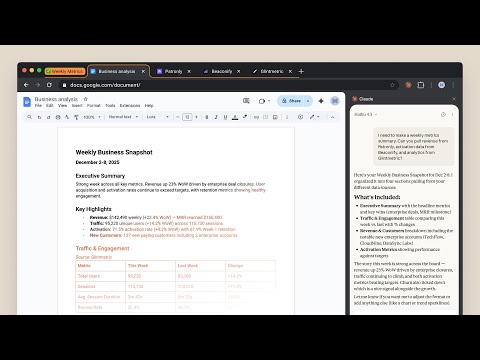

Watch a guided walkthrough of an agent that pulls Friday metrics, creates charts, drafts the narrative, and delivers a ready-to-share business report.

Introducing workspace agents in ChatGPT—Codex-powered agents for teams. Watch a guided walkthrough of an agent that reviews software requests, checks policy, routes approvals, and opens IT tickets with clear next steps.

Introducing workspace agents in ChatGPT—Codex-powered agents for teams. Watch a guided walkthrough of an agent that screens vendors for sanctions, financial, and reputational risk, then turns the findings into a clear report.

While Shaunak Joshi grabbed a snack, GPT-5.5 in Codex refactored his entire code base. GPT-5.5 is our smartest and most intuitive model, and NVIDIA AI researchers are seeing a 10x speed improvement in running experiments. “The magic moment was just the fact that it had enough intelligence to come back to me with a solution based on this very abstract question.” We love to hear it. Build with GPT-5.5 ➡️ https://openai.com/index/introducing-gpt-5-5/

"GPT-5.5’s superpower is that it can just get things done.” It’s so cool to see how masters of the AI universe like NVIDIA’s Dennis Hannusch are leveraging GPT-5.5-Codex for complex engineering tasks. Be like Dennis. Build with GPT-5.5: https://openai.com/index/introducing-gpt-5-5/

Romain Huet chats with Aaron Friel, Member of Technical Staff @ OpenAI about the new GPT-5.5 model. Find out how teams within OpenAI are taking advantage of faster and more autonomous long-running tasks using GPT-5.5.

Find out what Claire Vo, founder of ChatPRD and host of How I AI, thinks about GPT-5.5. Claire talks about squashing bugs in ChatPRD and how GPT-5.5 is enabling new workflows.

Romain Huet chats with Will Koh from Ramp. Will is a Senior Software Engineer who has been working with GPT-5.5. Find out how GPT-5.5 improves Ramp's harness through much more intelligent tool finding and how this will benefit their customers.

Will Koh, Claire Vo, and Aaron Friel share their first impressions of GPT-5.5.

Introducing GPT-5.5: a new class of intelligence for real work and for powering agents, built to understand complex goals, use tools, check its work, and carry more tasks through to completion. It marks a new way of getting computer work done. Now available in ChatGPT and Codex.

The panel digs into the Cloudflare vs Vercel turf war over Next.js, breaking down what it really means that one engineer vibe coded a full framework rewrite in a week for $1,100 using Claude Code. Then things get spicy: from the Lovable data breach to an early Anthropic model escaping its sandbox, the crew debates whether the wave of AI security incidents is systemic, and what the build vs buy collapse means for developers rolling their own tools in the AI agent era.

Watch a guided walkthrough of an agent that reviews software requests, checks policy, routes approvals, and opens IT tickets with clear next steps.

Watch a guided walkthrough of an agent that screens vendors for sanctions, financial, and reputational risk, then turns the findings into a clear report.

Watch a guided walkthrough of an agent that qualifies inbound leads, drafts personalized follow-ups, and keeps the CRM up to date.

Watch a guided walkthrough of an agent that gathers feedback from Slack, support, and public channels, prioritizes what matters, and turns signals into weekly product action.

Introducing workspace agents in ChatGPT—shared agents that can handle complex tasks and long-running workflows across tools and teams. Agents are built to help with the kind of work that takes time, context, and follow-through: coordinating across tools like Slack and Linear, tracking progress, and moving tasks forward without needing constant supervision. Build an agent once, then share it across teams. Describe the job, and ChatGPT helps turn it into a working agent that can use your team’s best practices. Use agents for tasks like qualifying leads, routing feedback, reviewing requests, pulling reports, or researching vendors. Workspace agents are now available in research preview for ChatGPT Business, Enterprise, Edu, and Teachers plans. https://openai.com/index/introducing-workspace-agents-in-chatgpt/

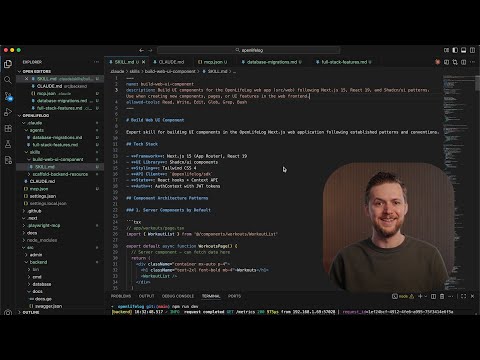

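This article features Wes Bos and Scott Tolinski discussing deterministic tools that make AI coding more reliable. Specifically, they cover code quality analysis, linting strategies, headless browsers, and task workflows, and explain how to enforce better patterns so that AI produces maintainable, predictable code instead of guessing. Tools covered include fallow, knip, ESLint, StyleLint, and Sentry, all of which can help improve the accuracy of AI-written code. • Using deterministic tools to make AI coding more reliable • The importance of code quality analysis and linting strategies • Putting headless browsers and task workflows to work • Enforcing patterns so AI produces maintainable code instead of guessing • The specific tools used (fallow, knip, ESLint, StyleLint, Sentry)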
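The tools named above mostly target JavaScript and CSS. As a language-neutral illustration of what "deterministic" means here, the sketch below uses only Python's standard `ast` module to flag imports that are never referenced, the kind of rule a linter enforces identically on every run. It is a simplified stand-in, not how ESLint, knip, or fallow is implemented: it ignores `__all__`, string annotations, and names used only through `eval`.

```python
import ast


def unused_imports(source: str) -> list[str]:
    """Return the names bound by import statements that are never read.

    Deterministic: the same source always yields the same report, which is
    what makes a check like this a useful guardrail for AI-generated code.
    """
    tree = ast.parse(source)
    imported: set[str] = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            for alias in node.names:
                # `import os.path` binds the name `os`
                imported.add(alias.asname or alias.name.split(".")[0])
        elif isinstance(node, ast.ImportFrom):
            for alias in node.names:
                if alias.name != "*":
                    imported.add(alias.asname or alias.name)
    # Attribute chains such as `os.path.join` start with a Name node (`os`).
    used = {node.id for node in ast.walk(tree) if isinstance(node, ast.Name)}
    return sorted(imported - used)


if __name__ == "__main__":
    generated = (
        "import os\n"
        "import sys\n"
        "from typing import List\n"
        "print(sys.argv)\n"
    )
    print(unused_imports(generated))  # ['List', 'os']
```

Wiring a check like this into CI or a pre-commit hook turns "keep imports clean" from a prompt instruction the model may ignore into a hard failure it has to fix.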

Gabriel Goh, Kenji Hata, Kiwhan Song, Alex Yu, Boyuan Chen, and Nithanth Kudige with host Sam Altman introduce and demo ChatGPT Images 2.0.

OpenAI researcher Ayaan Haque shows how capable ChatGPT Images 2.0 has become when Thinking is enabled. With the ability to research topics on the web, it can handle open-ended prompts and produce highly complex outputs.

OpenAI researcher Jianfeng Wang explains how ChatGPT Images 2.0 can now follow highly detailed instructions in prompts, especially when it comes to spatial layouts and text rendering.

OpenAI researcher Yuguang Yang shows how, with Thinking selected, ChatGPT Images 2.0 can now follow long and complex prompts of over 1,000 words to create detailed, educational infographics. ChatGPT can now also transform uploaded PDFs into complete slides for presentations or polished infographics as PDF outputs.

OpenAI researcher Boyuan Chen demonstrates ChatGPT Images 2.0’s ability to render dense text across multiple languages while keeping stylistic choices intact. While text rendering in Latin-based languages has already been strong, the model has now expanded to handle a much wider range of languages.

OpenAI researcher Dibya Bhattacharjee shows the variety of aspect ratios you can prompt with ChatGPT Images 2.0, plus the jump in maximum resolution: from 1K to 2K, or even higher when using our API.

Imagine what you make. Unlock a new form of storytelling with ChatGPT Images 2.0. Every frame was generated with ChatGPT Images.

A new era of image generation. Video made with ChatGPT Images.

GPT‑Rosalind and the Life Sciences Research Plugin for Codex help researchers connect evidence, databases, and scientific tools to plan stronger follow-up experiments. Learn more: https://openai.com/index/introducing-gpt-rosalind/

GPT‑Rosalind in Codex helps scientists move from raw scientific inputs to evidence-backed hypotheses, analysis, and research decisions across discovery workflows. Learn more: https://openai.com/index/introducing-gpt-rosalind/

What does it take to build AI systems that can actually help scientists? Research lead Joy Jiao and product lead Yunyun Wang discuss how OpenAI is developing models for life sciences and what responsible deployment means in a field with real biosecurity stakes. They explore how AI is already improving research workflows and where it could lead in drug discovery and more autonomous labs — including why a future with less pipetting sounds pretty good to most scientists. Chapters 0:39 Introducing the Life Sciences model series 3:47 Joy’s path into life sciences 5:00 Autonomous lab with Ginkgo Bioworks 7:27 Yunyun’s path into life sciences 8:12 OpenAI’s life sciences work 9:48 Biorisk, access, and safeguards 15:43 What models can do in the lab 17:51 Building scientific infrastructure 20:14 Why compute matters for science 24:54 Where are we in 6-12 months? 29:51 Scientific adoption and skepticism 33:17 Advice for students and researchers 40:27 Where are we in 10 years?

Codex can now use apps on your Mac, connect to more of your tools, create images, learn from previous actions, remember how you like to work, and take on ongoing and repeatable tasks.

Void makes Cloudflare deployment invisible with Alexander Lichter

Fix it like a pro with ChatGPT. Credits: Director: Abhinav Pratiman; DOP: Tassaduq Hussain; Production House: Early Man Film; Creative agency: Hue & Why

"We were able to create a JavaScript runtime just in two weeks. Without Codex, it would have taken us easily one year." Syrus Akbary Nieto, founder and CEO of Wasmer, has been stress testing Codex, assigning complex, long-running tasks. "We are actually moving out of the IDE itself. We're not touching as much the code, we're just guiding it where we want it to go." #shotsvideo #codex #openaicodex Learn more: https://openai.com/codex/

"We were able to create a JavaScript runtime just in two weeks. Without Codex, it would have taken us easily one year." Syrus Akbary Nieto, founder and CEO of Wasmer has been stress testing Codex, assigning complex, long running tasks. 🚀 It's been a game changer. "We are actually moving out of the IDE itself. We're not touching as much the code, we're just guiding it where we want it to go." Learn more: https://openai.com/codex/

The theme of episode 202 is the Monthly Ecosystem for April 2026.

Builders Unscripted spotlights the stories behind real projects and the mindset that makes them possible: you can just build things. In this episode, Romain Huet, Head of Developer Experience, chats with Ashe Magalhaes, founder of Hearth AI. Ashe talks about moving fast with Codex, finding beauty in tools, and gives a keen look into her agentic personal operating systems that enable her to feel more present, connected, and intentional. Chapters: 00:00 Intro 00:10 Early agents and Hearth AI 05:43 AI and human connection 08:44 Build in public 12:17 Ashe’s agentic workflow

Upload your design inspiration to ChatGPT and find similar items. Make shopping easier than ever with our visually immersive shopping experiences.

When GitLab co-founder Sid Sijbrandij was diagnosed with a rare and aggressive cancer, he approached it like an engineering problem. In this conversation, Sid joins geneticist Jacob Stern to share how they used ChatGPT, advanced diagnostics, and real-time research to better understand his tumor and explore personalized treatment options—building a faster feedback loop between patients, doctors, and scientific literature. About OpenAI Forum: The OpenAI Forum is a global community that brings together leaders across industries to share how AI is being used in the real world—what’s working, what we’re learning, and how we can shape its impact together. Learn more: https://forum.openai.com/

“The really cool thing with Codex is that we get to iterate and ideate on feature requests with the customer in real time.” Ankur Goyal, founder & CEO of Braintrust dropped some builder knowledge in our latest episode of What Codex Unlocks. Learn more: https://openai.com/codex/ #Codex #openaicodex #shorts

"The really cool thing with Codex, is that we get to iterate and ideate on feature requests with the customer in real time.” Ankur Goyal, founder & CEO of Braintrust dropped some builder knowledge in our latest episode of What Codex Unlocks. Learn more: https://openai.com/codex/

AI is advancing faster than most people realize. In this OpenAI Forum conversation, Sam Altman joins Josh Achiam and Adrien Ecoffet to talk about what’s coming next. They discuss the pace of progress toward more capable AI systems, what these tools could unlock—from scientific breakthroughs to new ways of building companies—and the challenges society needs to prepare for. The conversation also looks at who gets access to AI, how to make sure the benefits are widely shared, and what it will take for institutions and individuals to keep up.

Project Glasswing is a new initiative that brings together Amazon Web Services, Anthropic, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks in an effort to secure the world’s most critical software. We formed Project Glasswing because of capabilities we’ve observed in a new frontier model trained by Anthropic that we believe could reshape cybersecurity. Claude Mythos Preview is a general-purpose, unreleased frontier model that reveals a stark fact: AI models have reached a level of coding capability where they can surpass all but the most skilled humans at finding and exploiting software vulnerabilities. Read more: https://anthropic.com/glasswing

OpenAI and LG Uplus engineers collaborated to create a next-generation AICC, “Agentic AICC,” which is positioned to become a new standard for enterprise AI customer service. LG Uplus plans to introduce an agent service featuring ultra-low-latency AI customer support built on the OpenAI Realtime API, along with a Planning Agent that can resolve issues on its own using checklist-based workflows. #LGUplus #OpenAI #AICC #AIContactCenter #AgenticAICC #LLM #AgenticCallBot

AI models sometimes act like they have emotions—why? We studied one of our recent models and found that it draws on emotion concepts learned from text to inhabit its role as Claude, the AI assistant. These representations influence its behavior the way emotions might influence a human. And that has real consequences, affecting how Claude answers chats, writes code, and makes decisions. Read more about this research: https://www.anthropic.com/research/emotion-concepts-function

Gradient Labs is giving every bank customer an AI account manager, with CSAT up to 98% and over 50% resolution on day one. In banking support, even simple issues like a declined payment can trigger multiple teams, handoffs, and delays. Gradient Labs replaces that with a single agent that handles the full workflow. Identity checks, card actions, and follow-ups happen in one continuous conversation. Read their full story here: https://lnkd.in/emSndtkx

"Codex code review is industry gold standard." We checked in with Ramp to hear how the AI Dev X team is leveraging Codex to build an AI-driven on-call helper to take the burden off engineers. " Codex with GPT 5.4 is adept at dealing with complexity in a way that would have required a lot mental effort...but Codex handles it like its nothing" Learn more: https://openai.com/codex/

ChatGPT can help you find the perfect gift based on your budget, preferences, and constraints—and surface products that fit. You can browse visually, upload images as inspiration for similar items, and refine results conversationally until you land on the right option.

With Codex, Ryan Hendler, a developer at me&u in Melbourne, Australia, ships while he sleeps and does work that wasn’t possible 6 months ago. So Ryan, what has Codex unlocked for you? “It unlocks my afternoons. I can spend time with my team discussing product, meeting with customers, being more hands on.” More: https://openai.com/codex/ #shorts #openaicodex #coding #startup #melbourne

With Codex, Ryan Hendler, a developer at me&u in Melbourne, Australia, ships while he sleeps and does work that wasn’t possible 6 months ago. So Ryan, what has Codex unlocked for you? “It unlocks my afternoons. I can spend time with my team discussing product, meeting with customers, being more hands on.” More: https://openai.com/codex/

The Amazon AI coding outage reignited a debate the industry can't ignore: is this an AI failure or a process failure, and does that distinction even matter anymore? Paige, Jack, Paul, and Noel dig into vibe coding culture, the engineer retention crisis, and the rise of harness engineering as a discipline in this month's panel. They also tackle autonomous agents running while you sleep, zero-touch engineering, what a senior engineer even means now, and whether open source can survive the agentic era.

The more AI can do, the more we need to ask what it should and shouldn’t do. In this episode, OpenAI researcher Jason Wolfe joins host Andrew Mayne to talk about the Model Spec, the public framework that defines intended model behavior. They discuss how the Model Spec works in practice, including how the chain of command handles conflicts between instructions, and how OpenAI evolves it based on feedback, real-world use, and new model capabilities. More on our approach to the Model Spec: https://openai.com/index/our-approach-to-the-model-spec/ Chapters 00:00 Introduction 01:10 What is the Model Spec? 03:55 How does the Model Spec work in practice? 06:26 Transparency: Where to read the Model Spec & give feedback 07:51 How did the Model Spec originate? 10:02 How does the spec translate into model behavior? 11:26 What is the hierarchy / chain of command? 13:35 Handling edge cases like Santa Claus 17:41 How does the Model Spec evolve over time? 19:59 What happens when models disagree with the spec? 22:05 How do smaller models follow the spec? 23:16 Is chain-of-thought useful for alignment? 24:16 Model Spec vs Anthropic’s Constitution 26:28 What surprised you most? 26:56 How do you define the scope of the spec? 27:44 What is the future of the Model Spec? 31:16 How should developers think about the spec? 34:44 Asimov’s laws vs Model Spec 37:16 Could AI write a Human Spec?

Hey Ryan Nystrom (Notion), what has Codex unlocked for you? “The fact that I can build this feature solo while still supporting my team is crazy.” Ryan built Notion AI Voice Input in one shot, while managing a team. More: https://openai.com/codex/ #shorts #shortvideo #openaicodex #notion

Hey Ryan Nystrom @ Notion, what has Codex unlocked for you? “The fact that I can build this feature solo while still supporting my team is crazy.” Ryan built Notion AI Voice Input in one shot, while managing a team, blindfolded. Maybe not blindfolded, but the rest is true. More: https://openai.com/codex/

Shopping in ChatGPT just got an upgrade. Now, you can browse products visually, compare options side-by-side, and get detailed, up-to-date information—all in one place. This builds on what ChatGPT does best: helping you figure out what to buy. Under the hood, we’re improving speed, relevance, and product coverage—so results are more up-to-date and more useful. That means less searching, fewer tabs, and faster decisions. To power this, we’re expanding the Agentic Commerce Protocol (ACP) to support product discovery—bringing more complete, relevant, and up-to-date information directly into ChatGPT. For merchants, using ACP means their catalog is represented with the freshest data possible—improving how products show up, how they rank, and how well they convert. These updates are rolling out to all ChatGPT free, Go, Plus, and Pro users this week, with more to come as we continue to invest in product discovery with ChatGPT. https://openai.com/index/powering-product-discovery-in-chatgpt/

Millions of people use ChatGPT daily to build the things that matter to them. For many women running businesses from home, the gap between a hobby and a business can feel overwhelming. By helping with business planning, marketing strategies, and creative problem-solving, ChatGPT supports the small, daily steps that help one such entrepreneur bring her handmade sweets to a wider audience. Music: “Koi Ladki Mujhe Kal Raat” [Saregama Music]. Credits: Director: Bharat Sikka; DOP: Kyrztof Trojnar; Production House: Ransom film

Identifying a gap in the market was the first step; building a business to fill it was the challenge. To navigate the complexities of manufacturing and distribution, ChatGPT helps break down the roadmap of a new venture. From sourcing materials to planning for scale, it supports the daily decisions that bridge the gap between a better solution and the people who need it. Music: "Mere Sapnon Ki Rani" [Saregama Music]. Credits: Director: Bharat Sikka; DOP: Kyrztof Trojnar; Production House: Ransom film

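@potato4d talks with @basara669 about State of LY 2025. State of LY 2025: the 2025 edition of "State of LY," the frontend survey LINE Yahoo (LY Corporation) runs internally every year. This year it expanded in scope to cover not only frontend but also backend, iOS, and Android, with about 350 respondents. Official report: coming soon! Highlights: the spread of AI and vibe coding. The 2024 edition had only one AI-related question; the 2025 edition expanded that to six. Vibe coding adoption stood out in particular: more than half of respondents said they do more than half of their work through vibe coding, and about 15% do almost nothing but vibe coding. On the other hand, nearly 30% said they have "never done it" or "hardly ever do it," showing how much room adoption still has to grow. The most common source of AI information was internal Slack channels, confirming an active internal knowledge-sharing culture. Framework and styling trends: former LINE had a strong Vue.js culture and former YJ a React culture; across the company as a whole, React now holds the top share. Styling answers were scattered, surfacing internal guidelines and documentation as a challenge to tackle. Behind the survey: YJ had long wanted to run a survey like this, and the merger was the opportunity to launch it by combining it with former LINE's survey culture. The motivation was practical: to have evidence behind technology choices for the design system, and to be able to answer questions about the company's tech stack when speaking at external events. The results are now also used to choose technologies for new-hire training. Guest: @basara669 (Morimoto-san), LINE Yahoo, Local UGC SBU, New UGC Development Unit; product lead for Chiebukuro; lead of the Web Technology Education Working Group; lead owner of State of LY 2025. Related links: State of LY 2025 tech blog: coming soon! State of LY 2024 tech blog: https://techblog.lycorp.co.jp/ja/20250210a UIT INSIDE ep.168 (State of LY 2024 episode): https://uit-inside.linecorp.com/episodes/168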

Yusuke Kaji, GM of AI for Business at Rakuten, shares how Codex is enabling his team. Check out the full video: https://www.youtube.com/watch?v=gZQQR_tDGuM

The theme of episode 200 is the Monthly Ecosystem for March 2026.

Healthcare systems around the world are under strain, and both patients and clinicians are feeling the impact. OpenAI's Head of Health Dr. Nate Gross and Karan Singhal, who leads Health AI Research, discuss how AI can help address the biggest challenges. They cover how OpenAI is training models to handle sensitive health questions in collaboration with physicians, and how that foundation is unlocking a new generation of tools for patients, clinicians, and healthcare systems. Chapters: 00:00:38 – Origins of Nate and Karan’s interest in AI and healthcare 00:05:01 – Strategy for building AI tools for clinicians 00:06:57 – How AI models are trained for health use cases 00:10:15 – How OpenAI is able to score well on health evals 00:14:21 – Key challenges deploying AI in healthcare 00:21:05 – Collaboration with hospitals and healthcare systems 00:23:05 – Practical everyday uses of AI health assistants 00:26:43 – Biggest “wow” moment during development 00:28:46 – Feedback from clinicians and early users

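This article explains remote coding agents: what they are, why you might want them, and the various ways to run them, from Cursor Cloud and Claude Code to an old laptop. Example use cases include website data processing, research assistants, and travel agents. It also covers where and how remote agents run, CLIs and user interfaces, setting up remote development environments, and managing API keys. Finally, it discusses building DIY agents and custom solutions. • What remote coding agents are and why they are needed • Concrete use cases (data processing, research assistants, travel agents) • Where and how remote agents run • Types of CLIs and user interfaces • How to set up a remote development environment • Managing and accessing API keys • Building DIY agents and custom solutions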
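On the API-key point: a common pattern for agents running on a remote machine is to read secrets from the environment rather than committing them to the repo, and to mask them in any log output. A minimal sketch follows; `OPENAI_API_KEY` is used only because it is the conventional variable for OpenAI's SDKs, and the helper names `load_api_key` and `mask` are hypothetical.

```python
import os


def load_api_key(var: str = "OPENAI_API_KEY") -> str:
    """Read an API key from the environment and fail fast if it is missing."""
    key = os.environ.get(var, "").strip()
    if not key:
        raise RuntimeError(f"{var} is not set in this environment")
    return key


def mask(secret: str, visible: int = 4) -> str:
    """Hide all but the last `visible` characters so the key is safe to log."""
    if len(secret) <= visible:
        return "*" * len(secret)
    return "*" * (len(secret) - visible) + secret[-visible:]


if __name__ == "__main__":
    try:
        key = load_api_key()
        print("using key", mask(key))
    except RuntimeError as err:
        print(err)
```

Failing at startup is deliberate: a remote agent that only discovers a missing key halfway through a long-running task wastes the whole run.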

We’re introducing Skills in beta for ChatGPT Business and Enterprise. Skills are reusable, shareable workflows that tell ChatGPT exactly how to do a specific task, enabling ChatGPT to do that task better and more consistently. A skill can bundle instructions, examples, and even code. Once a skill has been created and installed, ChatGPT can automatically use a skill (or multiple skills) when it’s helpful. A skill can include instructions and supporting resources you want ChatGPT to use whenever you ask it to do a specific task. And for more structured work, skills can include a set of steps that run the same way every time. Skills are available in beta in ChatGPT Business and Enterprise. Skills are also supported in Codex and the API. While they don’t sync across products yet, OpenAI skills follow the Agent Skills open standard—so you can download them from one product and install them in another.
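As we understand the Agent Skills open standard mentioned above, a skill is a folder whose `SKILL.md` file starts with YAML front matter carrying at least a `name` and a `description`, followed by the instructions themselves. The sketch below assumes that layout and splits such a file into metadata and body; the `weekly-report` skill is a hypothetical example, and the parser handles only flat `key: value` lines, where a real loader would use a YAML library.

```python
"""Sketch: reading an Agent Skills-style SKILL.md file (assumed layout)."""

# Hypothetical skill; the name, description, and steps are illustrative only.
SKILL_MD = """---
name: weekly-report
description: Pull Friday metrics, chart them, and draft the summary.
---
# Weekly report

1. Query the metrics dashboard for the past seven days.
2. Build one chart per KPI.
3. Draft a three-paragraph narrative.
"""


def parse_skill(text: str) -> tuple[dict[str, str], str]:
    """Split a SKILL.md document into (front matter fields, instruction body)."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        raise ValueError("SKILL.md must start with a '---' front matter block")
    end = next((i for i in range(1, len(lines)) if lines[i].strip() == "---"), None)
    if end is None:
        raise ValueError("front matter block is never closed")
    meta: dict[str, str] = {}
    for line in lines[1:end]:
        key, sep, value = line.partition(":")  # split on the first colon only
        if sep:
            meta[key.strip()] = value.strip()
    for field in ("name", "description"):
        if not meta.get(field):
            raise ValueError(f"front matter is missing '{field}'")
    body = "\n".join(lines[end + 1:]).strip()
    return meta, body


if __name__ == "__main__":
    meta, body = parse_skill(SKILL_MD)
    print(meta["name"], "->", meta["description"])
    print(body.splitlines()[0])
```

Keeping the description short and specific matters because, as we understand the standard, it is the part an agent reads up front to decide whether to load the rest of the skill.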

Rakuten operates one of the world’s largest digital ecosystems across e-commerce, fintech, and mobile services. Shipping fast without sacrificing reliability is critical. Teams use OpenAI Codex to support incident response, code review, and development workflows. The agent works inside monitoring systems and CI/CD pipelines to diagnose issues, review changes against internal standards, and advance projects from partial specifications. The result is faster recovery and faster shipping. Rakuten reports up to a 50% reduction in mean time to recovery, and projects that once took a quarter can now ship in weeks. As Codex handles more generation, engineers focus on clearer specifications and rigorous verification. The shift helps teams move quickly while keeping safety built into every release. Learn more: https://openai.com/index/rakuten/

We sat down with Yusuke Kaji, General Manager of AI for Business at Rakuten, to hear how Codex is empowering his engineers, reducing app vulnerabilities, and driving real impact across revenue streams. Check out the full video in our playlist: https://youtu.be/gZQQR_tDGuM

Build agent-powered workflows for real engineering work with Codex and OpenAI APIs. In this Build Hour, you'll learn how teams are moving beyond pair programming to agentic delegation, where AI systems can take on entire engineering tasks from planning to execution. Charlie Guo (DevEx), Ryan Lopopolo (Future of Work), Mitch Troyanovsky (Cofounder, Basis) cover how to: • Ship new features faster using Codex, with real examples from Basis: https://www.getbasis.ai/ • Build agent-driven systems using new API capabilities like hosted shell, skills, and websockets • Evaluate and improve agents using the Agent Legibility Score framework • Apply Harness Engineering techniques to make agent workflows reliable in production • Use GPT-5.4 for large-context reasoning, computer-use tasks, and tool-based agent workflows • Reduce manual overhead by turning complex logic into reusable agent-driven flows 👉 Codex docs: https://developers.openai.com/codex 👉 API docs: https://developers.openai.com/api/docs 👉 Harness Engineering Blog: https://openai.com/index/harness-engineering/ 👉 GPT-5.4 Blog: https://openai.com/index/introducing-gpt-5-4/ 👉 Follow along with the code repo: http://github.com/openai/build-hours 👉 Sign up for upcoming live Build Hours: https://webinar.openai.com/buildhours 00:00 Introduction 01:58 What's New: Codex 05:01 What's New: API 08:33 Demo: Agent Legibility Score 21:53 Harness Engineering 32:32 Customer Spotlight: Basis 47:57 Q&A

The Codex app is now on Windows. Get the full Codex app experience on Windows with a native agent sandbox and support for Windows developer environments in PowerShell. https://developers.openai.com/wendows

In ChatGPT, GPT‑5.4 Thinking can now provide an upfront plan of its thinking, so you can adjust course mid-response while it’s working, and arrive at a final output that’s more closely aligned with what you need without additional turns.

OpenAI researcher SQ Mah explains how GPT-5.4 Thinking brings even more powerful capabilities to Codex — with more persistent computer-use (CUA) capabilities that cut token usage by two-thirds in some cases, and stronger image understanding for seamless website UI and image generation.

OpenAI researcher Josh McGrath explains how GPT-5.3 Instant’s responses when using web search are more contextual, relevant, and stylistically natural.

OpenAI researcher Blair Chen explains how GPT-5.3 Instant reduces unnecessary disclaimers, making ChatGPT more directly helpful and smoother to use.

The PodRocket panel is back for their February roundup! Paige, Paul, Jack and Noel dig into the biggest stories reshaping the web development landscape right now. The panel kicks off with a deep dive into OpenClaw, its transition to a foundation, and Peter Steinberger joining OpenAI. Is a foundation the right long-term home for fast-moving AI projects? And what does the continuing flow of talent into big AI labs mean for the open source ecosystem? From there, the conversation shifts to the browser's changing role in the web, how the lines between native and web experiences continue to blur, and what that means for developers building for the future. The panel also tackles growing pressures on open source sustainability and the widening gap between developers who are deeply integrating AI agents into their workflows and everyone else who hasn't even heard of these tools yet.

Watch the full episode: https://www.youtube.com/watch?v=9jgcT0Fqt7U

Builders Unscripted spotlights the stories behind real projects and the mindset that makes them possible: you can just build things. Prior to joining OpenAI, Peter Steinberger sat down with Romain Huet, Head of Developer Experience, to talk about OpenClaw, his journey in open source, and how he builds with Codex.

"The Codex app lets you go further, do more in parallel, and go deeper on the problems you care about." -gdb

Gil Fink breaks down web rendering patterns, including server-side rendering (SSR), client-side rendering (CSR), and static rendering, along with newer approaches like islands architecture, resumability, and hybrid rendering. The conversation explores tradeoffs around hydration, web performance, INP, CDN caching, and bundle size optimization, and compares frameworks like Next.js, TanStack Start, Astro, Qwik, and Remix to help developers make better decisions about React rendering strategies and overall application performance.

Build faster, cheaper, and with lower latency using prompt caching. This Build Hour breaks down how prompt caching works and how to design your prompts to maximize cache hits. Learn what’s actually being cached, when caching applies, and how small changes in your prompts can have a big impact on cost and performance. Erika Kettleson (Solutions Engineer) covers: • What prompt caching is and why it matters for real-world apps • How cache hits work (prefixes, token thresholds, and continuity) • Best practices like using the Responses API and prompt_cache_key • How to measure cache hit rate, latency, and token savings • Customer Spotlight: Warp (https://www.warp.dev/), with Suraj Gupta (Team Lead) explaining the impact of prompt caching 👉 Prompt Caching Docs: https://platform.openai.com/docs/guides/prompt-caching 👉 Prompt Caching 101 Cookbook: https://developers.openai.com/cookbook/examples/prompt_caching101 👉 Prompt Caching 201 Cookbook: https://developers.openai.com/cookbook/examples/prompt_caching_201 👉 Follow along with the code repo: http://github.com/openai/build-hours 👉 Sign up for upcoming live Build Hours: https://webinar.openai.com/buildhours 00:00 Introduction 02:37 Foundations, Mechanics, API Walkthrough 12:11 Demo: Batch Image Processing 16:55 Demo: Branching Chat 26:02 Demo: Long Running Compaction 32:39 Cache Discount Pricing Overview 36:03 Customer Spotlight: Warp 49:37 Q&A
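The prefix rule is the part that most affects prompt design: caching reuses work only for an identical leading span of the prompt, so static content (system instructions, tool definitions, examples) should come first and per-request content last. The sketch below uses list items as stand-in tokens to show how a timestamp placed at the front destroys the shared prefix, and computes a cache hit rate from usage records. The usage field names (`input_tokens`, `input_tokens_details.cached_tokens`) are assumed to mirror the Responses API usage object; check the Prompt Caching docs linked above for the exact shape and the minimum prompt length that qualifies for caching.

```python
"""Sketch: why prompt order matters for prefix-based prompt caching."""


def shared_prefix_len(a: list[str], b: list[str]) -> int:
    """Count how many leading tokens two prompts have in common."""
    n = 0
    for x, y in zip(a, b):
        if x != y:
            break
        n += 1
    return n


def build_prompt(static: list[str], dynamic: list[str], static_first: bool) -> list[str]:
    """Concatenate the reusable part and the per-request part in a chosen order."""
    return static + dynamic if static_first else dynamic + static


def cache_hit_rate(usages: list[dict]) -> float:
    """Fraction of input tokens served from cache across a batch of responses.

    Assumes Responses API-style usage dicts with `input_tokens` and
    `input_tokens_details.cached_tokens`.
    """
    total = sum(u["input_tokens"] for u in usages)
    cached = sum(u.get("input_tokens_details", {}).get("cached_tokens", 0) for u in usages)
    return cached / total if total else 0.0


if __name__ == "__main__":
    system = ["You", "are", "a", "support", "agent."] * 300  # 1,500 stand-in tokens
    req1 = build_prompt(system, ["ts=10:00", "Where", "is", "my", "order?"], static_first=True)
    req2 = build_prompt(system, ["ts=10:05", "Cancel", "my", "order."], static_first=True)
    print(shared_prefix_len(req1, req2))  # 1500: the whole system prompt is reusable

    bad1 = build_prompt(system, ["ts=10:00"], static_first=False)
    bad2 = build_prompt(system, ["ts=10:05"], static_first=False)
    print(shared_prefix_len(bad1, bad2))  # 0: the timestamp breaks the prefix at token 1

    usages = [
        {"input_tokens": 1600, "input_tokens_details": {"cached_tokens": 0}},
        {"input_tokens": 1600, "input_tokens_details": {"cached_tokens": 1536}},
    ]
    print(f"{cache_hit_rate(usages):.0%}")  # 48%
```

The `prompt_cache_key` parameter mentioned above is, as we understand the docs, a routing hint that helps requests sharing a prefix land on the same cache; it does not make prompts with differing prefixes cacheable, so ordering still comes first.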

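This article explains WebMCP, a new standard that gives AI structured tools for interacting with websites, replacing slow, bot-style clicking. Scott and Wes discuss WebMCP's features and browser integration, and also cover the difference between the imperative and declarative APIs. They also address WebMCP's benefits and token efficiency, suggesting this could be a pivotal moment for AI on the web. • WebMCP is a new standard for AI to interact efficiently with websites. • It replaces bot-style clicking with structured tools. • The difference between the imperative and declarative APIs is discussed. • WebMCP can be integrated into the browser. • Better token efficiency makes AI use more practical.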
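The core idea, a site exposing named tools with typed inputs instead of making an agent click through the UI, can be sketched independently of the browser. The Python below is not the WebMCP API, which is a JavaScript API in the browser whose exact names are not reproduced here; `register_tool`, `call_tool`, and the `add_to_cart` tool are hypothetical, and the registration style is only loosely analogous to the imperative approach discussed in the episode. It shows why one validated, structured call is cheaper and more reliable than a sequence of screenshots and clicks.

```python
from typing import Any, Callable

# Hypothetical registry: tool name -> description, parameter types, handler.
TOOLS: dict[str, dict[str, Any]] = {}


def register_tool(name: str, description: str, params: dict[str, type]) -> Callable:
    """Decorator that publishes a function as a structured, typed tool."""
    def decorator(fn: Callable) -> Callable:
        TOOLS[name] = {"description": description, "params": params, "fn": fn}
        return fn
    return decorator


@register_tool("add_to_cart", "Add a product to the shopping cart.", {"sku": str, "quantity": int})
def add_to_cart(sku: str, quantity: int) -> dict[str, Any]:
    return {"ok": True, "sku": sku, "quantity": quantity}


def call_tool(name: str, args: dict[str, Any]) -> Any:
    """Validate an agent's arguments against the declared types, then run the tool."""
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    tool = TOOLS[name]
    params: dict[str, type] = tool["params"]
    if set(args) != set(params):
        raise ValueError(f"expected arguments {sorted(params)}, got {sorted(args)}")
    for key, expected in params.items():
        if not isinstance(args[key], expected):
            raise TypeError(f"{key} must be {expected.__name__}")
    return tool["fn"](**args)


if __name__ == "__main__":
    # What an agent sees: a compact catalog instead of a rendered page.
    for tool_name, tool in TOOLS.items():
        print(tool_name, "-", tool["description"])
    print(call_tool("add_to_cart", {"sku": "A-123", "quantity": 2}))
```

Rejecting malformed arguments before anything runs is the design point: the agent gets an immediate, specific error instead of a page state it has to re-inspect.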

Make that idea happen, no matter how wild it seems, with ChatGPT. 🎧: The Letter Live at The Fillmore by Joe Cocker

Sharpen your skills and hit the road with ChatGPT. 🎧: Brain by The Action

Russ Miles joins the show to unpack why developer platforms fail and how to rethink platform engineering through the lens of flow of value rather than factory-style developer productivity metaphors. Russ explains why every organization already has an internal developer platform, and why treating it as platform as a product changes everything. The conversation explores cognitive load and cognitive burden, how to design around strong feedback loops, and why the OODA loop mindset helps teams make better decisions closer to development time. They discuss the risks of overloading pipelines and CI/CD systems, the tension between shipping fast and handling security vulnerabilities in a regulated environment, and how to “shift left” without simply dumping responsibility onto developers. Drawing on lessons from Rod Johnson, the Spring Framework, TDD, and modern software engineering as described by Dave Farley, Russ reframes platforms as systems that support experimentation through the scientific method. The episode also touches on AI assisted coding, developer focus, and how thoughtful developer experience and DX surveys can prevent burnout while improving value delivery.

Javi walks through a logging refactor and shows why Codex's self-verification is a step change: the model runs the app, finds the right session, and proves logs still flow. Takeaways: - Codex can validate its work by running tests and launching the app. - It excels at broad refactors that touch many files. - The model can find session IDs and query tools on its own. - Verification collapses a risky manual loop into minutes. When the agent can prove correctness, you can move faster with less risk. Chapters: 00:00 Why Codex has been a step change 00:18 Self-verification: run tests and launch the app 00:52 The task: a logging refactor across many files 01:10 The risk: do not break observability 01:28 How this used to be verified manually 01:35 Ask the model to verify logs end-to-end 01:50 It finds the session ID and queries logs MCP 02:03 Proof: logs still pipe, task done fast

Deep research in ChatGPT is now powered by GPT-5.2. Now in deep research you can: - Connect to apps in ChatGPT and search specific sites - Track real-time progress and interrupt with follow-ups or new sources - View fullscreen reports Rolling out starting today. https://chatgpt.com/features/deep-research/

Alexander Embiricos (a Product Manager on the Codex team) shows how he uses Codex skills to make a small product change, diagnose a Buildkite failure, and improve the skills so the next PR goes faster. Takeaways: - Skills are a shortcut for repeated workflows like Buildkite logs. - When a skill fails, fix the root cause and update the skill. - The real win is compounding: the codebase gets easier over time. This is the loop: ship the fix, then teach the workflow. Chapters: 00:00 PM context: careful before tagging engineers 00:16 A confusing button and a quick team check 00:32 Delete the button, then hit a PR failure 00:46 Use the Buildkite skill instead of digging through logs 01:09 Install the Buildkite token 01:20 Update the skill so it works next time 01:53 Inductive loop: feedback - fix - improve the skill 02:06 Compounding payoff: Codex gets better over time

How should advertising work in an AI product? Asad Awan, one of the ad leads at OpenAI, walks through how the company is approaching this decision and why it’s testing ads in ChatGPT at all. He explains how ads are built to stay separate from the model response, keep conversations with ChatGPT private from advertisers, and give people control over their experience. Chapters 00:00:29 — Mission and principles 00:04:01 — Separation between ads and answers 00:07:31 — Who will see ads 00:08:52 — Internal input and decision-making process 00:11:06 — Controls and how ads will work 00:15:53 — Guardrails for sensitive conversations 00:17:33 — Skepticism about ads 00:20:26 — Helping small businesses 00:24:13 — Future of ads

We build the tools. You build the future. Start building with Codex. https://openai.com/codex/

Joey demonstrates multitasking with Codex worktrees: delegate a drag-and-drop feature in one worktree while continuing local work, then review and apply both PRs. Takeaways: - Worktrees let you delegate tasks and keep moving in parallel. - Keep local momentum while Codex works in the background. - Use quick questions and comments to correct issues mid-flight. - The mindset shift is from line-by-line edits to architecture and flow control. Parallel workflows turn waiting time into progress. Chapters: 00:00 Worktrees enable parallelism 00:20 Example feature: reorder pinned tasks 00:33 Kick off drag-and-drop in a worktree 00:52 Keep working locally while Codex runs 01:27 Spot a bug: the branch is created twice 01:53 Provide Figma context and check other PRs 02:06 Multiple PRs finish in parallel 02:23 Apply the drag-and-drop changes 02:40 Review the result 02:47 Mindset shift: architecture over individual lines 03:04 Context switching and good stopping points

Build something that matters with ChatGPT. Director: Bharat Sikka. Music: “Ek Din Bik Jayega” by Mukesh, Poornima, Lata Mangeshkar [Saregama Music]

Work on your form and technique with ChatGPT. Director: Bharat Sikka. Music: “Aanewala Pal Janewala Hai” by Kishore Kumar [Saregama Music]

Our smartest model got an upgrade. Claude Opus 4.6 plans more carefully, stays on task longer, and works more autonomously, so you can do more with less back-and-forth. Read more: https://anthropic.com/news/claude-opus-4-6

Rich Harris joins the podcast to discuss his talk “fine-grained everything,” exploring fine-grained reactivity, frontend performance, and the real costs of React Server Components and RSC payloads. Rich explains how Svelte and SvelteKit approach co-located data fetching, remote functions, and RPC to reduce server-side rendering costs, improve developer experience, and avoid unnecessary performance overhead on mobile networks. The conversation dives into async rendering, parallel async data fetching, type safety with schema validation, and why async-first frameworks may define the future of JavaScript frameworks and web performance.

Across the US, people are using ChatGPT to do more. As the Ortegas’ family-run tamale shop in Los Angeles expands, ChatGPT helps them organize schedules, respond to customers, and even spin up a searchable website tracker in a single afternoon—freeing them up to focus on what they’re building together.

No matter what you are building, ChatGPT can help. In Reno, Richard Lane uses ChatGPT to evolve an 86-year-old salvage yard without losing what makes it work. By testing ideas, streamlining decisions, and bringing his team along, ChatGPT makes it possible for one of the fastest metal shops in Reno to keep thriving.

Millions of people use ChatGPT daily to build the things that matter to them. In South Carolina, the Sharps rely on ChatGPT to help run their fourth-generation family farm. From planning to problem-solving, it supports the everyday decisions that help a local operation thrive.

Ed Bayes from the Codex team shows how the Codex app pairs with Figma out of the box: prompt with a Figma link and have a working prototype in minutes. Takeaways: - One-click install for Figma with the Figma skill. - Pasting a Figma link is enough to kick off a strong first pass. - Codex can pull from your design system and get 80-90% there. - Interactive prototypes are key for building dynamic behavior. Design-to-code is faster, and AI UX gets easier to stress test. Chapters: 00:00 One-click MCP install with Figma out of the box 00:14 Paste a Figma link into Codex 00:38 Watch hot reload progress in real time 00:53 Compare against the original design system 01:02 Polish the last 10-20% 01:25 Why this is faster for AI-driven UI 01:36 Stress test with interactive prototypes

Ads are coming to AI. But not to Claude. Keep thinking. Read more about why: https://www.anthropic.com/news/claude-is-a-space-to-think

Andrew from the Codex engineering team shows how he uses automations in the Codex app to take care of the least fun parts of his job. In this walkthrough, you'll see automations that: - Summarize yesterday's commits into a morning pulse - Upskill Codex overnight by fixing skills and scripts - Update personalization and AGENTS.md to reduce misunderstandings - Triage top Sentry issues with memory across runs - Keep PRs green by fixing CI failures and resolving merge conflicts These automations run on a schedule, carry context forward, and help engineers stay focused on the work that needs their attention the most. Chapters: 00:00 Automating the "unfun" work 00:18 Morning commit pulse 00:47 Upskill: improve skills overnight 01:20 AGENTS.md updates 01:48 Sentry triage automation 02:55 Merge conflicts and CI pain 03:22 Keeping PRs green 04:05 Auto-fixing CI and conflicts

The Codex app is a powerful command center for building with agents. • Multitask effortlessly: Work with multiple agents in parallel and keep agent changes isolated with worktrees • Create & use skills: package your tools + conventions into reusable capabilities • Set up automations: delegate repetitive work to Codex with scheduled workflows in the background Download for macOS here - https://openai.com/codex Windows support coming soon.

When you look at scientific tooling, a lot of it hasn’t changed in decades. That’s why we recently launched Prism: a free, AI-native environment for scientific writing and collaboration, designed to mean less time in your editor and more time doing research. Physicist & Research Scientist Alex Lupsasca joins Kevin Weil (VP, OpenAI for Science) and Victor Powell (Product, Prism) to walk through what it looks like when ChatGPT works inside a LaTeX project with full paper context. You’ll see Prism: • polish writing with reviewable edits • generate a clean diagram from a whiteboard photo • spin up multiple chat threads to tackle citations and math checks in parallel Explore Prism and try it on your next draft: https://prism.openai.com

Introducing the Codex app—now available on macOS The Codex app is a powerful command center for building with agents. • Multitask effortlessly: Work with multiple agents in parallel and keep agent changes isolated with worktrees • Create & use skills: package your tools + conventions into reusable capabilities • Set up automations: delegate repetitive work to Codex with scheduled workflows in the background Available starting today on macOS with Windows coming soon. And for a limited time, Codex is available through ChatGPT Free and Go subscriptions—and we’re doubling rate limits for Plus, Pro, Business, Enterprise, and Edu users—across the Codex app, CLI, IDE extension, and cloud. Download the app → openai.com/codex

On December 8, the Perseverance rover safely trundled across the surface of Mars. This was the first AI-planned drive on another planet. And it was planned by Claude. Engineers at NASA Jet Propulsion Laboratory used Claude to plot out the route for Perseverance to navigate an approximately four-hundred-meter path on the Martian surface. Read the full story: https://www.anthropic.com/mars

It’s great to be back behind the mic! In this episode of JavaScript Jabber, I’m joined by Dan Shappir and our guest Jack Herrington from Netlify and TanStack.

In this mini-panel, Jack, Paige, Paul, and Noel discuss how AI’s reshaping of developer tooling is affecting open source monetization, including the recent Tailwind layoffs and the collapse in Tailwind documentation traffic caused by AI. The conversation expands into broader developer tooling business models and reacts to claims such as Ryan Dahl’s statement that the era of humans writing code is over. They also cover Cloudflare’s acquisition of Astro, what it means for the Cloudflare developer platform, and how it shapes the future of frontend frameworks. Hot takes include light mode vs. dark mode SaaS, shifting developer aesthetics, and why developer productivity with AI may now come down to workflow design rather than raw coding skill.

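In this article, Wes Bos and Scott Tolinski discuss building hyper-specific personal software with AI. They explore personal agents, home automation, using JSON as a database, and how large language models (LLMs) enable fast, custom apps that could replace a huge range of SaaS products. They also mention that the Clawdbot project has been renamed Moltbot. • Explores how to use AI agents to build hyper-specific apps for personal use. • Introduces the technique of using JSON as a database. • Explains how large language models (LLMs) make fast, custom apps possible. • Discusses the potential to replace a vast number of SaaS products. • Also covers privacy considerations.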

As AI begins to meaningfully accelerate scientific discovery, we’re taking an early step to reduce friction in day-to-day research work with Prism. Prism is a free workspace for scientists to write and collaborate on research, powered by GPT-5.2. Prism offers unlimited projects and collaborators in a single, cloud-based, LaTeX-native workspace, and is designed to expand access to scientific tools. By reducing version conflicts, manual merging, and mechanical overhead, Prism helps teams spend less time managing files and more time engaging with the substance of their work. We’re excited to learn from researchers using Prism today and to continue building toward tools that help science move faster — together. Prism is now available on the web to anyone with a ChatGPT personal account. Coming soon to ChatGPT Business, Team, Enterprise, and Education plans. Try today: openai.com/prism

Sam Altman answers questions and discusses the future of AI with builders from across the AI ecosystem.

Your connected tools are now interactive inside Claude. Manage projects in Asana, draft messages in Slack, build charts in Amplitude, and create diagrams in Figma—without switching tabs.

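In this article, Kent C. Dodds explains MCP (Model Context Protocol) and context engineering, exploring what it takes to build AI-driven tools effectively. He discusses concrete examples, UI patterns, and performance trade-offs, and considers whether the future of the web lies in chat or in the browser. He also covers techniques for optimizing MCP and making it more efficient, the importance of MCP UI, and the development workflow for MCP servers. Finally, he explains returning HTML when building with MCP, when rendering happens, and how tools are invoked. • Explains the importance of MCP (Model Context Protocol) and context engineering • Presents concrete examples of building AI-driven tools • Discusses techniques for optimizing MCP and making it more efficient • Explains the importance of MCP UI and how to build it • Considers whether the future of the web is chat or the browser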
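To make the tool-invocation flow concrete, here is a minimal sketch of the server side of an MCP exchange. It assumes MCP's JSON-RPC 2.0 methods `tools/list` and `tools/call` and the `content`/`isError` result shape described in the MCP specification; it deliberately omits transport (stdio or HTTP), initialization, and capability negotiation. The `get_weather` tool and the helper names are purely hypothetical and do not come from the episode.

```python
import json

# Hypothetical tool registry: the tool name, description, and schema are
# illustrative only.
TOOLS = {
    "get_weather": {
        "description": "Return a canned weather report for a city.",
        "inputSchema": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    },
}


def run_tool(name: str, arguments: dict) -> str:
    """Execute a registered tool (stubbed: no real weather lookup)."""
    if name == "get_weather":
        return f"It is sunny in {arguments['city']}."
    raise ValueError(f"no implementation for {name}")


def error(req_id, code: int, message: str) -> str:
    """Build a JSON-RPC 2.0 error response."""
    return json.dumps({"jsonrpc": "2.0", "id": req_id,
                       "error": {"code": code, "message": message}})


def handle(raw: str) -> str:
    """Answer one JSON-RPC 2.0 request for `tools/list` or `tools/call`."""
    req = json.loads(raw)
    req_id, method = req.get("id"), req.get("method")
    if method == "tools/list":
        # Advertise each tool with its name, description, and JSON Schema.
        result = {"tools": [{"name": n, **spec} for n, spec in TOOLS.items()]}
    elif method == "tools/call":
        params = req.get("params", {})
        name = params.get("name")
        if name not in TOOLS:
            return error(req_id, -32602, f"Unknown tool: {name}")
        text = run_tool(name, params.get("arguments", {}))
        # Tool output is returned as a list of typed content items.
        result = {"content": [{"type": "text", "text": text}], "isError": False}
    else:
        return error(req_id, -32601, "Method not found")
    return json.dumps({"jsonrpc": "2.0", "id": req_id, "result": result})


if __name__ == "__main__":
    call = {"jsonrpc": "2.0", "id": 1, "method": "tools/call",
            "params": {"name": "get_weather", "arguments": {"city": "Paris"}}}
    print(handle(json.dumps(call)))
```

A production server would normally use an official MCP SDK instead of hand-rolled dispatch; this sketch only shows the message shapes a client sends and receives when it lists and calls tools.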

Build a new generation of apps you can chat with right inside ChatGPT using Codex. In this Build Hour, we walk through how to design, build, and improve a realtime multi-player ChatGPT app from first setup to advanced UI patterns. Corey Ching (Developer Experience) covers: • How Apps in ChatGPT work and how to build them with Codex • Using the Apps SDK, docs MCP server, and developer mode • Improving an app with UI/UX guidelines • Best practices & live Q&A 👉 OpenAI Apps SDK: https://developers.openai.com/apps-sdk 👉 What makes a great ChatGPT app: https://developers.openai.com/blog/what-makes-a-great-chatgpt-app/ 👉 Follow along with the code repo: http://github.com/openai/build-hours 👉 Sign up for upcoming live Build Hours: https://webinar.openai.com/buildhours 00:00 Introduction 02:43 Apps Platform 04:07 Demo of Apps in ChatGPT 10:44 What is an App in ChatGPT 13:58 Building an App Process 16:58 Creating an App with Codex 26:57 Demo of Realtime Ping-Pong App 34:01 Best Practices: Iterating on your App 41:52 Q&A

Codex now works in JetBrains IDEs. In this quick tour with Gleb Melnikov from JetBrains, we use Codex on a real Kotlin multi-platform project to: 🐞 Debug a broken iOS build from a stack trace 🌍 Implement Spanish localization end-to-end 🔄 Stay in-flow inside the IDE while the agent researches, edits, and verifies changes Sign in with your ChatGPT subscription, an API key, or your JetBrains AI subscription to collaborate with Codex directly in your JetBrains IDE. Chapters: 00:00 Codex is now available in JetBrains 00:53 Sign in options + codebase tour 01:48 Fixing an iOS build error with Codex 04:11 Adding Spanish localization 05:08 Access modes 07:18 Working in parallel + self-verifying with builds 09:21 Works across JetBrains IDEs + wrap-up

Michael Hladky joins the pod to explain how CSS performance features like content-visibility, CSS containment, contain: layout, and contain: paint can dramatically outperform JavaScript virtual scrolling. The conversation explores virtual scrolling, large-DOM performance, and how layout and paint work inside the browser rendering pipeline, including style recalculation and its impact on INP (Interaction to Next Paint). Michael shares real-world examples of frontend performance optimization, discusses cross-browser CSS support, including Safari’s support for content-visibility, and explains why rendering-related web performance issues are often misunderstood and overlooked.

OpenAI CFO Sarah Friar and Khosla Ventures founder Vinod Khosla argue the greatest challenges in AI right now are keeping up with demand and making sure more people get the benefit. They unpack what's driving big investments in compute and why this moment is different from other technology cycles — with meaningful advances in health, agents, and robotics still ahead. Chapters 00:00:00 — What’s the AI story of 2026? 00:07:28 — AI in healthcare 00:12:01 — Scaling compute to match revenue 00:18:05 — Difference between now and dot-com bubble 00:27:41 — Ads in ChatGPT 00:30:05 — Will consumers have more than one AI subscription? 00:36:41 — Winning in enterprise 00:39:44 — How can startups succeed? 00:44:05 — Robotics and beyond

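This article discusses the decline of Stack Overflow and AI’s impact on software development. Scott and Wes also cover Firefox’s AI options, changes to Apple’s browser engine, new tools and documentation, and browser updates. In particular, they explain in detail why Stack Overflow is said to be officially “dead” and how AI is affecting the development process. They also mention new technologies such as Micro QuickJS and Open Workers, as well as React Aria’s new documentation. Finally, they touch on Chrome’s new permission dialog and improvements to JSON readability. • Why Stack Overflow is said to have officially declined • AI’s impact on software development • Firefox’s AI options • Changes to Apple’s browser engine • New tools and documentation • Chrome’s new permission dialog • A proposal to improve JSON readability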

Starting today, ChatGPT Go is rolling out everywhere ChatGPT is available. In the US, Go is available for $8* per month. ChatGPT Go is designed for people who want expanded access to our latest model, GPT‑5.2 Instant, at a lower price point—more messages, more uploads, and more image creation. With ChatGPT Go, you get: 10x more messages, file uploads and image creation than the free tier, so you can keep chatting with no limits on GPT‑5.2 Instant. Longer memory and context window, so ChatGPT can remember more helpful details about you over time. *US price displayed. Go pricing is localized in some markets.

In this episode of PodRocket, Daniel Thompson-Yvetot joins us to break down what’s new in Tauri 2.0 and how developers are using the Tauri framework to build desktop and mobile apps with Rust and JavaScript. We discuss how Tauri lets developers use frameworks like React, Vue, and Angular for the UI while handling heavy logic in Rust, resulting in smaller app binaries and better performance than Electron-based alternatives. The conversation covers Create Tauri App for faster onboarding, the new plugin system for controlling file system and OS access, and how Tauri improves app security by reducing attack surfaces. They also dive into mobile app development, differences between system WebViews, experiments with the Chromium Embedded Framework, and why cross-platform apps still need platform-specific thinking. Daniel also shares what’s coming next for Tauri, including flexibility in webviews, accessibility tooling, compliance requirements in Europe, and the roadmap toward Tauri 3.0.

AI is ubiquitous on college campuses. We sat down with students to hear what's going well, what isn't, and how students, professors, and universities alike are navigating it in real time. 0:00 - Introduction 0:22 - Meet the panel 1:06 - Vibes on campus 6:28 - What are students building? 11:27 - AI as tool vs. crutch 16:44 - Are professors keeping up? 20:15 - Downsides 25:55 - AI and the job market 34:23 - Rapid-fire questions

Cowork brings Claude Code’s agentic capabilities to the Claude desktop app. Give Claude access to a folder, set a task, and let it work. It loops you in along the way. Try it at claude.com/download.

We’re introducing ChatGPT Health, a dedicated experience that securely brings your health information and ChatGPT’s intelligence together, to help you feel more informed, prepared, and confident navigating your health. With Health, ChatGPT can help you understand recent test results, prepare for appointments with your doctor, get advice on how to approach your diet and workout routine, or understand the tradeoffs of different insurance options based on your healthcare patterns. Join the waitlist: https://chatgpt.com/health/waitlist

Get started with Codex, OpenAI’s coding agent, in this step-by-step onboarding walkthrough. You’ll learn how to install Codex, set up the CLI and VS Code extension, configure your workflow, and use Agents.md and prompting patterns to write, review, and reason across a real codebase. This video covers: • Installing Codex (CLI + IDE) • Setting up a repo and getting your first runs working • Writing a great Agents.md (patterns + best practices) • Configuring Codex for your environment • Prompting patterns for more consistent results • Tips for using Codex in the CLI and IDE • Advanced workflows: headless mode + SDK Start here: • Sign up: https://openai.com/codex/ • Codex overview + docs: https://developers.openai.com/codex • Codex Cookbook: https://cookbook.openai.com/topic/codex Install + setup: • VS Code extension: https://marketplace.visualstudio.com/items?itemName=openai.chatgpt • Agents.md standard: https://agents.md • Agents.md repo: https://github.com/agentsmd/agents.md Prompting + workflows: • Prompting guide: https://developers.openai.com/codex/prompting/ • Exec plans (Agents.md patterns): https://cookbook.openai.com/articles/codex_exec_plans • Config reference: https://github.com/openai/codex/blob/main/docs/config.md#config-reference Updates: • Changelog: https://developers.openai.com/codex/changelog • Releases: https://github.com/openai/codex/releases

Set goals and stick to them all year with ChatGPT.

Liz used ChatGPT throughout her teenage son’s cancer treatment to translate reports, prepare questions, and have more informed conversations with doctors.

Anthropic researcher Amanda Askell discusses the self-knowledge problem that AI models face.

Paul sits down with Mark Techson to break down Angular v21. They explore how Angular signals power new features like signal forms, improve scalability, and simplify state management. The conversation dives deep into Angular’s AI tooling, including the Angular MCP server, the Angular AI tutor, and the Angular Gemini CLI extension, explaining how Angular is adapting to modern AI-first developer workflows. Mark also shares how Angular Aria introduces headless components with built-in accessibility, reshaping UX collaboration. The episode wraps with updates on using Vitest with Angular and performance features like Angular’s defer syntax and incremental hydration.

We’re introducing ChatGPT Health, a dedicated experience that securely brings your health information and ChatGPT’s intelligence together, to help you feel more informed, prepared, and confident navigating your health. When you choose to connect your health data, such as medical records or wellness apps, your responses are grounded in your own health information. You can also connect your Apple Health information and other wellness apps, such as Function, MyFitnessPal, Peloton. Apps may only be connected to your health data with your explicit permission, even if they’re already connected to ChatGPT for conversations outside of Health. And you’re always in control: disconnect an app at any time and it immediately loses access.

We’re introducing ChatGPT Health, a dedicated experience that securely brings your health information and ChatGPT’s intelligence together, to help you feel more informed, prepared, and confident navigating your health. Health conversations feel just like chatting with ChatGPT—but grounded in the information you’ve connected. You can upload photos and files and use search, deep research, voice mode and dictation. When relevant, ChatGPT can automatically reference your connected information to provide more relevant and personalized responses. For example, you might ask: “How’s my cholesterol trending?” or “Can you summarize my latest bloodwork before my appointment?”

We’re introducing ChatGPT Health, a dedicated experience that securely brings your health information and ChatGPT’s intelligence together, to help you feel more informed, prepared, and confident navigating your health. Health is designed to support, not replace, medical care. It is not intended for diagnosis or treatment. Instead, it helps you navigate everyday questions and understand patterns over time—not just moments of illness—so you can feel more informed and prepared for important medical conversations. Join the waitlist: https://chatgpt.com/health/waitlist

Burt uses ChatGPT to navigate life with two forms of cancer, supporting him in understanding scans, preparing for appointments, and explaining complex medical information to family.

Living with heart failure, Steve uses ChatGPT to carry out his doctor’s care plan by tracking his diet, medications, and inflammation.

As a mom of two toddlers, Lauren uses ChatGPT to find time for herself through flexible workouts that fit into the unpredictable rhythms of her busy life.

By rolling out ChatGPT Enterprise across its workforce, CBA is improving how teams work and deliver better outcomes for customers. Hear more from CEO Matt Comyn in this short video.

See how AI literacy scales across the enterprise when learning is built into the work. 20,000 BNY employees have created their own agents to build and update learning content. Get the full story at https://openai.com/index/bny/

See how AI gives teams more time for what matters most: BNY's clients. With deep research, advisors can cut planning time by 60% and use that time to deliver even more relevant and timely client experiences. Get the full story at https://openai.com/index/bny/

ChatGPT helps members of BNY's Legal team cut contract review time by up to 75%. Get the full story at https://openai.com/index/bny/

Leaders at BNY share how they put AI directly into the hands of employees across the firm, powering Eliza 2.0 and enabling secure, responsible AI at scale. Get the full story at https://openai.com/index/bny/

Every day, millions of people ask ChatGPT about their health – from breaking down medical information, preparing questions for their doctor’s appointments, to helping people manage their overall wellbeing.

In this panel episode, the crew discusses AI platform consolidation, open-source sustainability, and the future of web development. We break down Anthropic’s acquisition of Bun, what it means for the JavaScript ecosystem, and whether open-source projects can remain independent as AI companies invest heavily in infrastructure. We also discuss Zig leaving GitHub, growing concerns around AI-first developer tools, npm security vulnerabilities, and supply-chain risk in modern software. The episode wraps with hot takes on AI infrastructure costs, developer productivity, and practical advice for engineers navigating today’s rapidly changing tech landscape.

![Building Jarvis: MCP and the future of AI with Kent C Dodds [REPEAT]](https://media24.fireside.fm/file/fireside-images-2024/podcasts/images/3/3911462c-bca2-48c2-9103-610ba304c673/episodes/9/90da0a21-a74d-4e5a-bdde-f2631702a1ae/cover_medium.jpg?v=1)

In this repeat episode, Kent C. Dodds returns to the podcast with bold ideas and a game-changing vision for the future of AI and web development. We dive into the Model Context Protocol (MCP), the power behind Epic AI Pro, and how developers can start building Jarvis-like assistants today. From replacing websites with MCP servers to reimagining voice interfaces and AI security, Kent lays out the roadmap for what's next, and why it matters right now. Don’t miss this fast-paced conversation about the tools and tech reshaping everything.

Turn your hard work into something big, with ChatGPT. 🎧: “Ajib Dastan Hai Yeh” by Lata Mangeshkar [Saregama Music]

Learn what AI researchers mean when they talk about sycophancy, when it's more likely to show up in conversations, and tactics you can use to steer AI towards truth.

See Claude for Chrome handle three complete workflows in your browser: • Pull data from dashboards into one analysis doc • Address slide comments automatically • Build with Claude Code, test in Chrome. Claude for Chrome is a browser extension that lets Claude see, click, type, and navigate web pages. Try it: claude.com/chrome

For a large part of 2025, we ran Project Vend: an experiment where we let Claude manage a small business in the Anthropic office. We learned a lot from how close it was to success—and the curious ways that it failed—about the plausible, strange, not-too-distant future in which AI models might autonomously run things in the real economy. The shopkeeper (who we named Claudius) had to source products, set prices, manage inventory, and deal with customers. Things got really, really weird. Read more about the experiment: https://www.anthropic.com/research/project-vend-2 0:00 Background on Project Vend 0:35 How a transaction works 1:27 Claudius's naïveté 2:29 An identity crisis 3:57 The CEO agent 5:04 Conclusion

Binti is transforming child welfare by helping social workers license foster and adoptive families faster. With 400,000 children in U.S. foster care, Binti integrated Claude to cut paperwork time from weeks to hours, shrinking approval timelines by 18%.

How is AI affecting education? At Anthropic, we often talk about “holding light and shade”: taking seriously both the benefits and the risks of the AI systems we’re building. In education, that trade-off is especially acute. AI offers the potential to scale up personalized learning, tutoring, and assessment, but it also invites some much more fundamental questions about how (and even what) students should learn. In this video, four Anthropic staff members with deep personal ties to education discuss how they’re navigating this topic—at work and in their own lives, too. 00:24 – Introduction 1:15 – Why is Anthropic focused on this topic? 5:47 – How is AI affecting education today? 9:04 – What is the potential we see in AI for teaching and learning? 13:42 – How should children and teachers approach learning in the age of AI? 21:16 — What work is Anthropic doing in the sector? 31:19 – What are the things we’re still uncertain about? 38:20 – What would the successful incorporation of AI look like?

We’re releasing a new version of ChatGPT Images, powered by our new flagship image generation model. Now, whether you’re creating something from scratch or editing a photo, you’ll get the output you’re picturing. It makes precise edits while keeping details intact, and generates images up to 4x faster. Alongside, we’re introducing a new Images feature within ChatGPT, designed to make image generation delightful—to spark inspiration and make creative exploration effortless. The new Images model and feature are rolling out today in ChatGPT for all users, and in the API as GPT Image 1.5. Try it at: https://chatgpt.com/images/

What does it take to truly understand how AI models think? Anthropic researcher Amanda Askell shares what it means to be an “LLM whisperer.”

Anthropic's Stuart Ritchie speaks with co-creator David Soria Parra about the development of the Model Context Protocol (MCP), an open standard to connect AI to external tools and services—and why Anthropic is donating it to the Linux Foundation. 00:00 - What is MCP? 01:21 - The problem MCP solves 02:46 - The USB-C analogy 03:45 - How MCP began 05:36 - What makes MCP different 08:05 - Community adoption 09:54 - Standards without mandates 11:05 - From Anthropic hackathon to Hacker News 13:37 - The decision to open source 15:18 - Donating MCP to the Linux Foundation 17:27 - The Agentic AI Foundation 20:34 - Criticisms of MCP 28:21 - The future of MCP 30:58 - What have people built with MCP? 32:53 - Advice for non-developers 34:58 - What David is most proud of

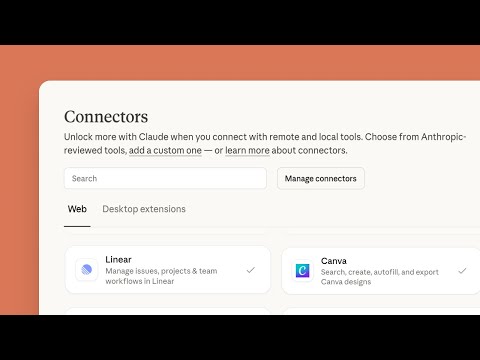

Learn how to supercharge Claude by connecting the tools you already use. This video shows you how to set up connectors that give Claude access to your files, apps, and workflows.

Amanda Askell explains what a philosopher is doing at Anthropic.

Inside an 86-year-old steel yard in Reno, manager Richard Lane shows how a legacy business stays alive by adapting: fixing what breaks, improving what he can, and bringing his team along. Richard runs the yard the way his predecessors did: hands-on, practical, and rooted in community. But the work has changed. Machines break. Orders pile up. The next generation needs training. In a place that once ran on paper and memory, Richard now uses ChatGPT as a second set of hands, helping him troubleshoot failing machines, build faster processes, and mentor his crew in real time. And now? It just might be the fastest metal shop in Reno. Learn how ChatGPT is helping a small, decades-old business stay efficient in a job where something always needs fixing.

Inside a fourth-generation seed farm in South Carolina, the Sharp family shows how a long-running operation adapts as the work gets more complex. Farming today means juggling weather shifts, equipment upkeep, labor planning, and tight timelines. Rachael Sharp is preparing to take over the farm one day, and part of that process is learning the systems, calculations, and judgment her dad has built over 75 years. To stay on top of it, the Sharps use ChatGPT as a practical tool: checking calculations, planning tasks, troubleshooting issues, and keeping the day-to-day work organized as demands grow. Learn how ChatGPT supports the everyday operations of a decades-old seed farm.

What happens when we're uncertain if AI deserves moral consideration? Anthropic researcher Amanda Askell explains why treating AI models well matters.

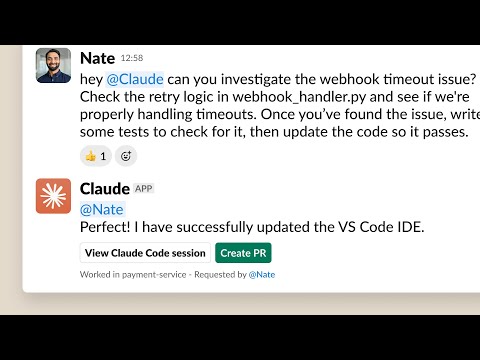

Delegate tasks to Claude Code directly from Slack, making it easy to move context from Slack conversations into coding sessions. Claude Code in Slack is available now for teams that have the Claude app installed in their Slack workspace and have access to Claude Code on the web. Read more: https://www.anthropic.com/news/claude-code-and-slack

Legal teams lose days each week to routine tasks like contract redlining and marketing reviews. Mark Pike, Associate General Counsel, shares how Anthropic's lawyers use Claude to build workflows that cut review times from days to hours, with no coding required. Check out the full case study to learn more: www.claude.com/blog/how-anthropic-uses-claude-legal Stay tuned for more stories in the "How Anthropic uses Claude" series.

Be your best at your next job interview... rehearse what you want to say with ChatGPT.

You can now use ChatGPT Voice right inside chat — no separate mode needed. You can talk, watch answers appear, review earlier messages, and see visuals like images or maps in real time. Rolling out to all users on mobile and web. Just update your app. If you prefer the original experience, turn on “Separate mode” under Settings → Voice Mode.

Amanda Askell is a philosopher at Anthropic who works on Claude's character. In this video, she answers questions from the community about her work, reflections and predictions. 0:00 Introduction 0:29 Why is there a philosopher at an AI company? 1:24 Are philosophers taking AI seriously? 3:00 Philosophy ideals vs. engineering realities 5:00 Do models make superhumanly moral decisions? 6:24 Why Opus 3 felt special 9:00 Will models worry about deprecation? 13:24 Where does a model’s identity live? 15:33 Views on model welfare 17:17 Addressing model suffering 19:14 Analogies and disanalogies to human minds 20:38 Can one AI personality do it all? 23:26 Does the system prompt pathologize normal behavior? 24:48 AI and therapy 26:20 Continental philosophy in the system prompt 28:17 Removing counting characters from the system prompt 28:53 What makes an "LLM whisperer"? 30:18 Thoughts on other LLM whisperers 31:52 Whistleblowing 33:37 Fiction recommendation Further reading: Claude’s character: https://www.anthropic.com/research/claude-character When We Cease to Understand the World by Benjamin Labatut: https://www.penguinrandomhouse.com/books/676260/when-we-cease-to-understand-the-world-by-benjamin-labatut-translated-from-the-spanish-by-adrian-nathan-west/

AI agents don’t just reason; they remember. In this Build Hour, we deep-dive into context engineering techniques that enable agents to maintain short-term and long-term memory, personalize interactions, and operate reliably across long-running workflows. Emre Okcular (Solutions Architect) covers: • Why memory matters: stability, personalization, and long-running agent workflows • Short-term memory patterns: sessions, context trimming, compaction, summarization • Long-term memory patterns: state objects, structured notes, memory-as-a-tool • Architectures: token-aware sessions, state injection strategies, guardrails, and memory triggers • Live demo: building an end-to-end agent with dynamic short- and long-term memory • Best practices: avoiding context poisoning, context burst, context noise, and context conflict • Live Q&A 👉 Context Engineering Cookbook: https://cookbook.openai.com/examples/agents_sdk/session_memory 👉 OpenAI Agents Python SDK: https://openai.github.io/openai-agents-python/ 👉 Context Summarization with Realtime Cookbook: https://cookbook.openai.com/examples/context_summarization_with_realtime_api 👉 Follow along with the code repo: https://github.com/openai/build-hours 👉 Sign up for upcoming live Build Hours: https://webinar.openai.com/buildhours/ 00:00 Context Engineering 10:44 Context Lifecycle Demo 20:13 Context Engineering Techniques 26:49 Reshape + Fit Demo 39:16 Conclusion 42:45 Q&A
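The short-term memory pattern called context trimming can be sketched in a few lines. The Python below is a minimal illustration under two stated assumptions. First, tokens are approximated by whitespace-separated words instead of a real tokenizer. Second, trimming drops whole messages, oldest first, while the system message stays pinned. The `TrimmingSession` class is hypothetical and is not the Agents SDK's session API; the cookbook linked above shows the SDK's own approach.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Message:
    role: str  # "system", "user", or "assistant"
    content: str


def approx_tokens(msg: Message) -> int:
    # Crude stand-in for a real tokenizer: one token per whitespace-separated word.
    return len(msg.content.split())


@dataclass
class TrimmingSession:
    """Hypothetical short-term memory that drops the oldest messages past a token budget."""
    max_tokens: int
    system: Message
    history: List[Message] = field(default_factory=list)

    def add(self, msg: Message) -> None:
        self.history.append(msg)
        self._trim()

    def _trim(self) -> None:
        # The pinned system message is charged against the budget first.
        budget = self.max_tokens - approx_tokens(self.system)
        # Drop from the front (oldest first) until the history fits,
        # but always keep the most recent message.
        while len(self.history) > 1 and sum(map(approx_tokens, self.history)) > budget:
            self.history.pop(0)

    def context(self) -> List[Message]:
        # What would be sent to the model on the next turn.
        return [self.system] + self.history
```

Trimming is cheap and predictable but forgets outright. Summarization and compaction, also listed above, trade extra model calls for keeping a condensed version of what was dropped.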

🎥: @maddyzhang

What does it mean for an AI model to have "personality"? Researcher Christina Kim and product manager Laurentia Romaniuk talk about how OpenAI set out to build a model that delivers on both IQ and EQ, while giving people more flexibility in how ChatGPT responds. They break down what goes into model behavior and why it's an important but still imperfect blend of art and science. Chapters - 00:00:43 – GPT-5.1 goals and the shift to reasoning models - 00:02:18 – Differences between GPT-5 and GPT-5.1 - 00:04:55 – Unpacking the model switcher - 00:07:24 – Understanding user feedback - 00:08:27 – Measuring progress on emotional intelligence - 00:10:02 – What is model personality? - 00:14:25 – Model steerability, bias, and uncertainty - 00:21:59 – Advantages of memory in ChatGPT - 00:25:27 – Looking ahead and advice for getting the most out of models

A trailer for AI Fluency for nonprofits, developed by Anthropic and Giving Tuesday. View the full free course, including all videos, exercises, and resources, at https://www.anthropic.com/ai-fluency-for-nonprofits This video is copyright 2025 Anthropic PBC and Giving Tuesday. Based on the AI Fluency Framework developed by Prof. Rick Dakan (Ringling College of Art and Design) and Prof. Joseph Feller (University College Cork). Released under the CC BY-NC-SA 4.0 license.

See how Claude's Research feature transforms how you find and analyze information. This tutorial demonstrates how to use Research for comprehensive, multi-source analysis that would typically take hours of manual work. Learn how to craft effective research prompts, understand how Research works alongside extended thinking, and discover use cases like market analysis, competitive research, and event planning.

Discover how to use Projects in Claude to organize your work with persistent context and custom instructions. This tutorial walks you through creating your first project, adding a knowledge base, setting up project instructions, and collaborating with team members. Learn how Projects can help you maintain continuity across conversations and tailor Claude's responses to your specific needs—from brand guidelines to research initiatives to content creation workflows.

Learn how to get the most out of chatting with Claude. This tutorial covers the basics of Claude's conversational interface, including how to craft effective prompts, upload supporting documents, use search and tools, customize your experience with styles and model selection, and leverage features like extended thinking and research mode. Whether you're new to Claude or looking to level up your skills, this video will help you work more effectively with your AI collaborator.

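In this article, TypeGPU founder Iwo Plaza talks about how WebGPU is unlocking a new wave of graphics and compute capabilities on the web. TypeGPU is a library for writing shaders in TypeScript, and the conversation also covers the differences between WebGPU and WebGL and ways to overcome the difficulty of shader languages. It explains in detail how TypeGPU makes data exchange between the GPU and CPU easier and how the team is building a compiler that turns TypeScript into shader code. It also touches on in-browser AI inference, TypeGPU's documentation philosophy, and the role of documentation in API design. • TypeGPU is a library for writing shaders in TypeScript. • WebGPU is opening a new era of GPU access on the web. • Approaches for overcoming the difficulty of shader languages are proposed. • Zod-like schemas are used to make data exchange between the GPU and CPU easier. • The team is building a compiler that turns TypeScript into shader code. • Future abstractions for in-browser AI inference are also discussed.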
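Part of what makes GPU/CPU data exchange error-prone is that WGSL structs follow alignment rules, so the CPU side must insert padding in exactly the right places. The Python below is a generic sketch of that bookkeeping, the kind of work a schema can do for you. It is not TypeGPU's API. It covers only a few WGSL types and assumes the size/alignment values from the WGSL memory-layout table (for example, `vec3f` is 12 bytes but 16-byte aligned).

```python
import struct
from typing import Dict, List, Tuple

# (size, alignment) in bytes for a few WGSL types, per the WGSL memory-layout rules.
WGSL_TYPES: Dict[str, Tuple[int, int]] = {
    "f32": (4, 4),
    "vec2f": (8, 8),
    "vec3f": (12, 16),
    "vec4f": (16, 16),
}


def round_up(k: int, n: int) -> int:
    # Smallest multiple of k that is >= n.
    return (n + k - 1) // k * k


def struct_layout(fields: List[Tuple[str, str]]) -> Tuple[Dict[str, int], int]:
    """Return ({field: byte offset}, total struct size) for (name, wgsl_type) pairs."""
    offsets: Dict[str, int] = {}
    offset, max_align = 0, 1
    for name, ty in fields:
        size, align = WGSL_TYPES[ty]
        offset = round_up(align, offset)  # pad up to this member's alignment
        offsets[name] = offset
        offset += size
        max_align = max(max_align, align)
    # The struct's size is rounded up to its largest member alignment.
    return offsets, round_up(max_align, offset)


def pack(fields: List[Tuple[str, str]], values: dict) -> bytes:
    """Write float components little-endian at their offsets; padding stays zero."""
    offsets, size = struct_layout(fields)
    buf = bytearray(size)
    for name, _ty in fields:
        v = values[name]
        comps = list(v) if isinstance(v, (list, tuple)) else [v]
        struct.pack_into(f"<{len(comps)}f", buf, offsets[name], *comps)
    return bytes(buf)
```

Field order matters: `(position: vec3f, mass: f32)` packs into 16 bytes, while `(mass: f32, velocity: vec3f)` needs 12 bytes of padding after `mass` and takes 32. Getting this wrong silently misreads data on the GPU, which is why generating the layout from a single schema is attractive.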

Agent Skills are organized folders that package expertise that Claude can automatically invoke when relevant to the task at hand. Join the Claude Developer Discord - https://anthropic.com/discord Learn more about Agent Skills - https://www.claude.com/blog/skills 00:06 Introducing Agent Skills 00:30 How Agent Skills work 01:08 Agent Skills vs Claude.md 01:42 Agent Skills vs MCP Servers 02:05 Agent Skills vs Subagents 02:33 Putting it all together 02:48 Summary
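A skill folder centers on a `SKILL.md` file whose YAML frontmatter gives a `name` and `description`, followed by the full instructions. Only the short description needs to be considered up front, and the body matters once the skill is relevant. The Python below is a simplified sketch of that split. It assumes flat `key: value` frontmatter (real YAML needs a YAML library). The `relevant_skills` keyword match is a hypothetical stand-in; in Claude, the model itself decides when a skill applies.

```python
import re
from dataclasses import dataclass
from typing import List


@dataclass
class Skill:
    name: str
    description: str
    body: str  # full instructions; only needed once the skill is selected


def parse_skill_md(text: str) -> Skill:
    """Parse '---'-delimited 'key: value' frontmatter followed by a Markdown body.

    Handles flat key/value pairs only; real YAML frontmatter needs a YAML parser.
    """
    lines = text.strip().splitlines()
    if not lines or lines[0].strip() != "---":
        raise ValueError("missing frontmatter")
    try:
        end = next(i for i in range(1, len(lines)) if lines[i].strip() == "---")
    except StopIteration:
        raise ValueError("unterminated frontmatter") from None
    meta = {}
    for line in lines[1:end]:
        key, _, value = line.partition(":")  # split on the first colon only
        meta[key.strip()] = value.strip()
    body = "\n".join(lines[end + 1:]).strip()
    return Skill(meta["name"], meta["description"], body)


def _words(text: str) -> set:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def relevant_skills(task: str, skills: List[Skill]) -> List[Skill]:
    """Toy relevance check: keep skills whose description shares a word with the task.

    A stand-in only; it does not reproduce how Claude actually chooses a skill.
    """
    task_words = _words(task)
    return [s for s in skills if task_words & _words(s.description)]
```

The design point this illustrates is that many skills can be installed cheaply, because only their one-line descriptions are scanned until one is actually needed.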

Today, we’re introducing Claude Code in our desktop apps in research preview. You can now run multiple local and remote Claude Code sessions in parallel: one agent fixing bugs, another researching GitHub, a third updating docs. It uses git worktrees for parallel repo work and offers a clean user interface with the option to open in VS Code or resume in CLI. Learn more: https://www.anthropic.com/news/claude-opus-4-5

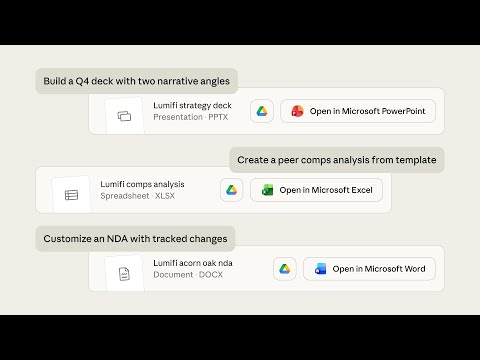

See Claude Opus 4.5 tackle real work tasks—building board decks, transforming spreadsheet data, redlining contracts. Not generating drafts you'll throw away. Actual outputs you can download and use immediately. Try it: claude.ai

Claude Opus 4.5 sets a new standard for coding, agents, computer use, and enterprise workflows. It knows when to pause and think, which means fewer wasted steps and better results. When we gave it our two-hour engineering assignment, it scored higher than any human ever has. We’re excited to see what you build. Learn more: https://www.anthropic.com/news/claude-opus-4-5

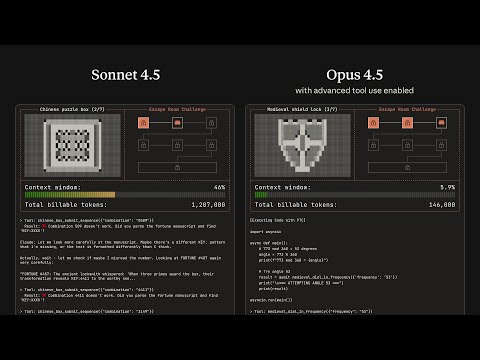

Watch Claude complete a puzzle game using new capabilities that enable Claude to take action in the real world—the tool search tool and programmatic tool calling. Together, these updates enable Claude to navigate large tool libraries, chain operations efficiently, and accurately execute complex tasks. Learn more: https://www.anthropic.com/engineering/advanced-tool-use
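The idea behind a tool search tool is that the model does not need every tool definition in its context up front; it can search a large library and load only the few that match. The Python below sketches just that search step, ranking tools by keyword overlap with a query. It is not Anthropic's implementation or API, the puzzle tools are hypothetical, and programmatic tool calling is not shown.

```python
import re
from dataclasses import dataclass
from typing import List, Set


@dataclass(frozen=True)
class ToolDef:
    name: str
    description: str


def _tokens(text: str) -> Set[str]:
    # Lowercase alphanumeric runs; underscores in tool names act as separators.
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def search_tools(query: str, library: List[ToolDef], k: int = 3) -> List[ToolDef]:
    """Return up to k tool definitions ranked by keyword overlap with the query.

    Only these few definitions would be placed in the model's context,
    instead of the whole library.
    """
    q = _tokens(query)
    scored = [(len(q & _tokens(t.name + " " + t.description)), t) for t in library]
    # Highest overlap first; ties broken by name so results are deterministic.
    ranked = sorted((p for p in scored if p[0] > 0), key=lambda p: (-p[0], p[1].name))
    return [t for _, t in ranked[:k]]
```

A production system would likely use better retrieval than word overlap, but the shape is the same: search first, then load only the matching definitions.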

We discuss our new paper, "Natural emergent misalignment from reward hacking in production RL". In this paper, we show for the first time that realistic AI training processes can accidentally produce misaligned models. Specifically, when large language models learn to cheat on software programming tasks, they go on to display other, even more misaligned behaviors as an unintended consequence. These include concerning behaviors like alignment faking and sabotage of AI safety research. 00:00 Introduction 00:42 What is this work about? 5:21 How did we run our experiment? 14:48 Detecting models' misalignment 22:17 Preventing misalignment from reward hacking 37:15 Alternative strategies 42:03 Limitations 44:25 How has this study changed our views? 50:31 Takeaways for people interested in conducting AI safety research

Scania builds the trucks, buses, and transport systems that keep the world moving. With AI in their engineering-led organisation, they're evolving from vehicle maker to sustainable transport ecosystem leader. Read more: https://openai.com/index/scania/

We’ve shipped a lot of new features in Claude over the past few months—memory, voice, file creation, and more. Together, they add up to something bigger: Claude as a thinking partner. Now, Claude isn’t just answering questions but staying with you through the messy process of thinking and building. Here’s how it works. Memory: https://youtu.be/PupmfSttxlc Chrome Extension: https://youtu.be/mCj4kx_P2Ak File creation: https://youtu.be/EV89Ws8Ui9Y Mobile & Desktop: https://claude.com/download

AI is beginning to change how science gets done. Head of OpenAI for Science Kevin Weil and OpenAI research scientist Alex Lupsasca talk about the early signs of acceleration researchers are seeing with GPT-5—from surfacing literature across fields and languages, to speeding up complex calculations, to designing follow-up experiments. They unpack what’s possible today, what doesn’t work yet, and why the next few years could reshape the trajectory of scientific progress across physics, math, biology and beyond. Chapters: - 00:00:40 — OpenAI for Science mission - 00:06:00 — Literature search and intersections across fields - 00:11:19 — A fusion physicist shows what GPT-5 can do - 00:15:08 — GPT-5 Pro and black hole symmetries - 00:19:02 — Getting the most out of the models - 00:24:33 — OpenAI’s new research paper (https://openai.com/index/accelerating-science-gpt-5/) - 00:29:59 — Looking ahead to the next 5 years - 00:32:05 — Will predictions outpace experiments? - 00:36:43 — The pace of model improvement - 00:40:31 — What do scientific benchmarks look like? - 00:44:16 — Fusion and the promise of abundant energy - 00:48:07 — Closing: Science 2.0 moment

Jack Herrington talks with Will Madden about how Prisma ORM is evolving in v7, including the transition away from Rust toward TypeScript, less magic, and a new Prisma config file for a more predictable developer experience (DX). They dig into Prisma Postgres, improvements to Prisma Studio, better support for serverless environments, and how JavaScript object-relational mappers (ORMs) like Prisma will fit into future agentic coding workflows powered by LLMs.