You can now access GPT 5.5 and GPT 5.5 Pro on Vercel's AI Gateway with no markup and no other provider accounts required.

You can now access DeepSeek V4 on Vercel's AI Gateway with no markup and no other provider accounts required.

You can now access GPT Image 2 on Vercel's AI Gateway with no markup and no other provider accounts required.

You can now access Moonshot AI's Kimi K2.6 on Vercel's AI Gateway with no markup and no other provider accounts required.

See how Zo Computer used Vercel AI Gateway and AI SDK to cut retry rates 20x, raise chat success to 99.93%, reduce P99 latency by 38%, and add new model support in under a minute while scaling its personal AI cloud platform.

You can now access Claude Opus 4.7 on Vercel's AI Gateway with no markup and no other provider accounts required.

You can now access Seedance 2.0 Video Generation via Vercel's AI Gateway with no other provider accounts required.

The shift to agentic infrastructure. For fifty years, infrastructure assumed a human operator. Someone to configure the server, click the deploy button, or read the logs.

Enforce zero data retention across your entire team and prevent providers from training on your data. AI Gateway handles routing and provider agreements for you.

Use Anthropic's Fast Mode feature for 2.5x faster output token speeds with Opus 4.6 on AI Gateway now.

You can now access GLM 5.1 on Vercel's AI Gateway with no markup and no other provider accounts required.

Vercel AI Gateway supports Zero Data Retention and No Prompt Training controls. Enforce data policies team-wide from the dashboard or per-request, with automatic routing to compliant models from Anthropic, OpenAI, Google, and many more.

Create MCP servers directly in Nuxt apps with type-safe tools, resources, and prompts for AI integrations.

You can now access Gemma 4 models (26B and 31B) on Vercel's AI Gateway with no markup and no other provider accounts required.

You can now access Qwen 3.6 Plus from Alibaba on Vercel's AI Gateway with no markup and no other provider accounts required.

You can now access GLM 5V Turbo on Vercel's AI Gateway with no markup and no other provider accounts required.

Flora’s FAUNA creative agent turns ideas into visual workflows on a digital canvas. Built on the Vercel AI Stack (AI SDK + Workflow SDK DurableAgent + Fluid Compute) to ship faster and iterate at scale.

The Vercel plugin now supports OpenAI Codex and Codex CLI. With the plugin, teams can access over 39 platform skills, three specialist agents, and real-time code validation to assist with their development workflow.

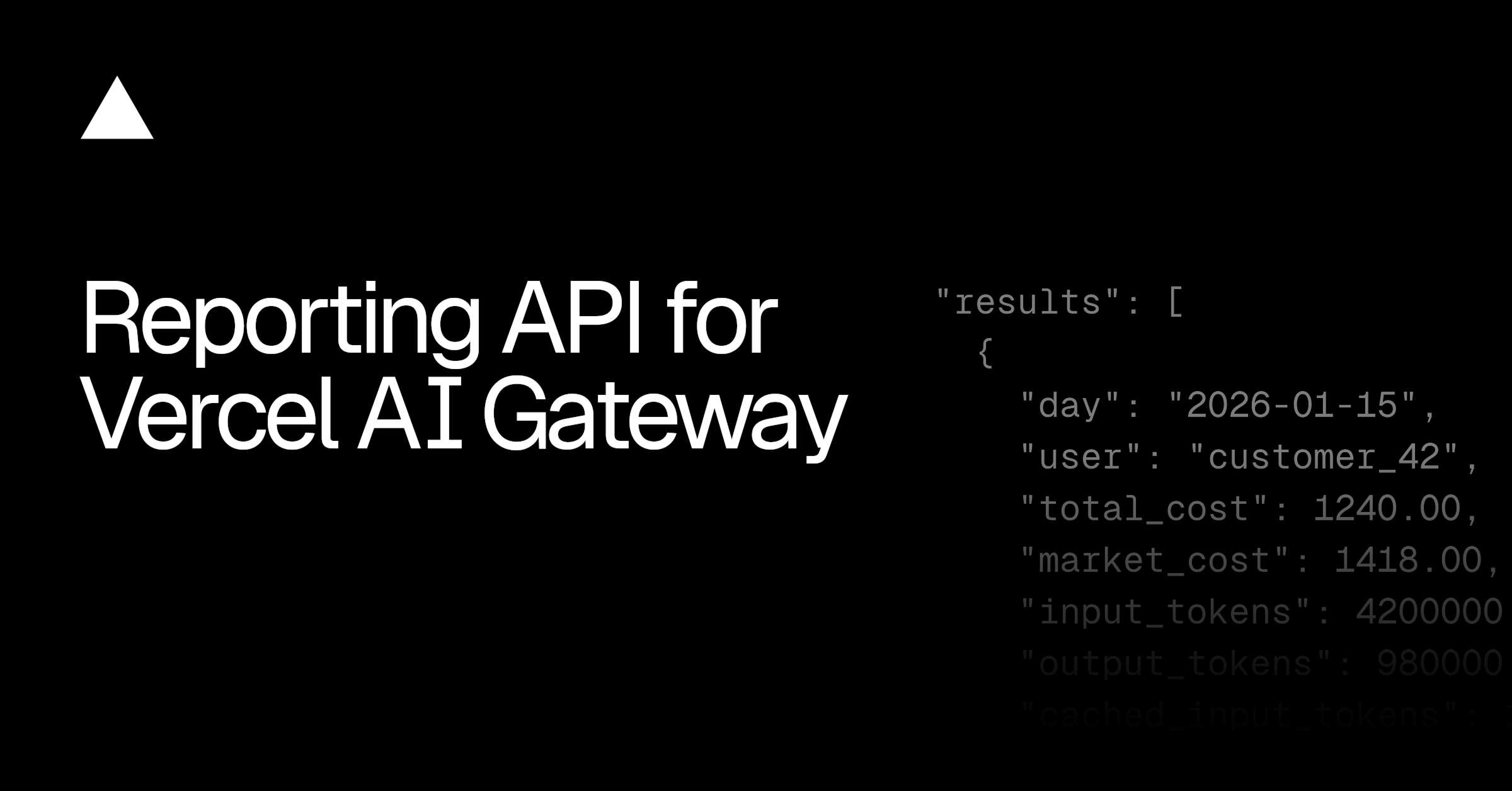

Break down AI inference costs by model, provider, user, and pricing tier. The Custom Reporting API gives you the data to calculate margins, track customer usage, and optimize spend across BYOK and system credentials.

Learn how SERHANT. scaled its AI platform S.MPLE to 900+ real estate agents using Next.js, Vercel, and AI SDK, without replatforming or locking into a single model provider.

The Vercel AI Accelerator is back with 39 early-stage teams from around the world, $6M+ in partner credits, and a Demo Day in San Francisco on April 16.

You can now access MiniMax M2.7 and M2.7 highspeed via Vercel's AI Gateway with no markup and no other provider accounts required.

Workflow DevKit on Vercel now encrypts all user data end-to-end. Workflow inputs, step arguments, return values, hook payloads, and stream data are automatically encrypted before being written to the event log.

You can now access GPT 5.4 Mini and Nano via Vercel's AI Gateway with no markup and no other provider accounts required.

Deploy LiteLLM server on Vercel to give developers OpenAI-compatible LLM access. Route models to any provider, including Vercel AI Gateway, with centralized control.

The latest release of AI Elements adds new components, an agent skill, and a round of bug fixes across the library.

Responses API is now supported on Vercel AI Gateway, with no other provider accounts required and no markup on inference cost.

AI Gateway now supports OpenAI's Responses API. You can use the openai SDK you already know, point it at AI Gateway, and route requests to models from Anthropic, Google, and OpenAI through a single...

Set per-provider timeouts on AI Gateway to trigger fast failover when a provider is slow to respond. Available for BYOK credentials.

You can now access GPT 5.4 and GPT 5.4 Pro via Vercel's AI Gateway with no markup and no other provider accounts required.

Build and deploy MCP Apps on Vercel with full Next.js support. Use the open standard for embedded UI interfaces that work across ChatGPT and other compatible hosts.

You can now access GPT 5.3 Chat (GPT 5.3 Instant) via Vercel's AI Gateway with no markup and no other provider accounts required.

You can now access the newest Gemini model, Gemini 3.1 Flash Preview, via Vercel's AI Gateway with no other provider accounts required.

How Waldium made a blog platform work for humans and AI alike. Waldium started the way most content platforms do: building blogs for humans to read. But something Amrutha kept noticing was quietly changing who, and what, showed up to read them.

You can now access Google's newest model, Gemini 3.1 Flash Image Preview (Nano Banana 2) via Vercel's AI Gateway with no other provider accounts required.

For Chief Strategy and Product Officer Jayme Fishman, the path to modernizing Avalara starts with how it builds.

You can now access OpenAI's newest model GPT 5.3 Codex via Vercel's AI Gateway with no other provider accounts required.

Chat SDK is now open source and available in public beta. It's a TypeScript library for building chat bots that work across Slack, Microsoft Teams, Google Chat, Discord, GitHub, and Linear — from a single codebase.

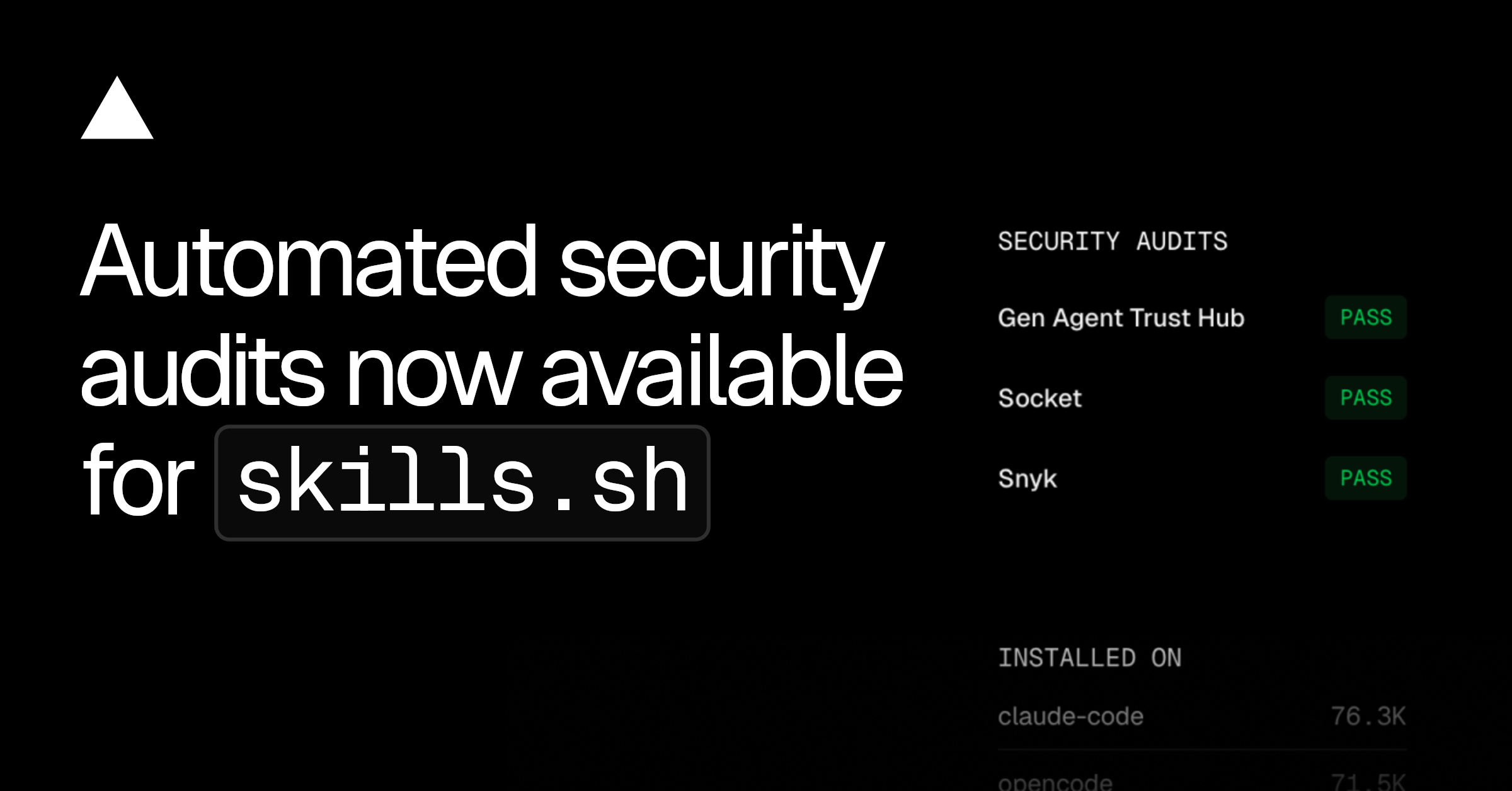

Andrew Qu reflects on Skills Night SF: how a weekend project became 69,000 community-created skills, the security partnerships protecting them, and what eight partner demos revealed about agents, context, and the future of development.

Generate photorealistic AI videos with the Veo models via Vercel AI Gateway. Text-to-video and image-to-video with native audio generation. Up to 4K resolution with fast mode options.

Generate AI videos with Kling models via the AI Gateway. Text-to-video with audio, image-to-video with first/last frame control, and motion transfer. 7 models including v3.0, v2.6, and turbo variants.

Generate stylized AI videos with Alibaba Wan models via the Vercel AI Gateway. Text-to-video, image-to-video, and unique style transfer (R2V) to transform existing footage into anime, watercolor, and more.

Generate AI videos with xAI Grok Imagine via the AI SDK. Text-to-video, image-to-video, and video editing with natural audio and dialogue.

Build video generation into your apps with AI Gateway. Create product videos, dynamic content, and marketing assets at scale.

Streamdown 2.3 focuses on design polish and developer experience. Tables, code blocks, and Mermaid diagrams have been redesigned.

Vercel now supports programmatic access to billing usage and cost data through the API and CLI, and we're introducing a new native integration that connects Vercel teams to Vantage accounts.

Vercel Blob now supports private storage for user content or sensitive files. Private blobs require authentication for all read operations, ensuring your data cannot be accessed via public URLs and is only available to authenticated requests.

You can now access Google's newest model, Gemini 3.1 Pro Preview via Vercel's AI Gateway with no other provider accounts required.

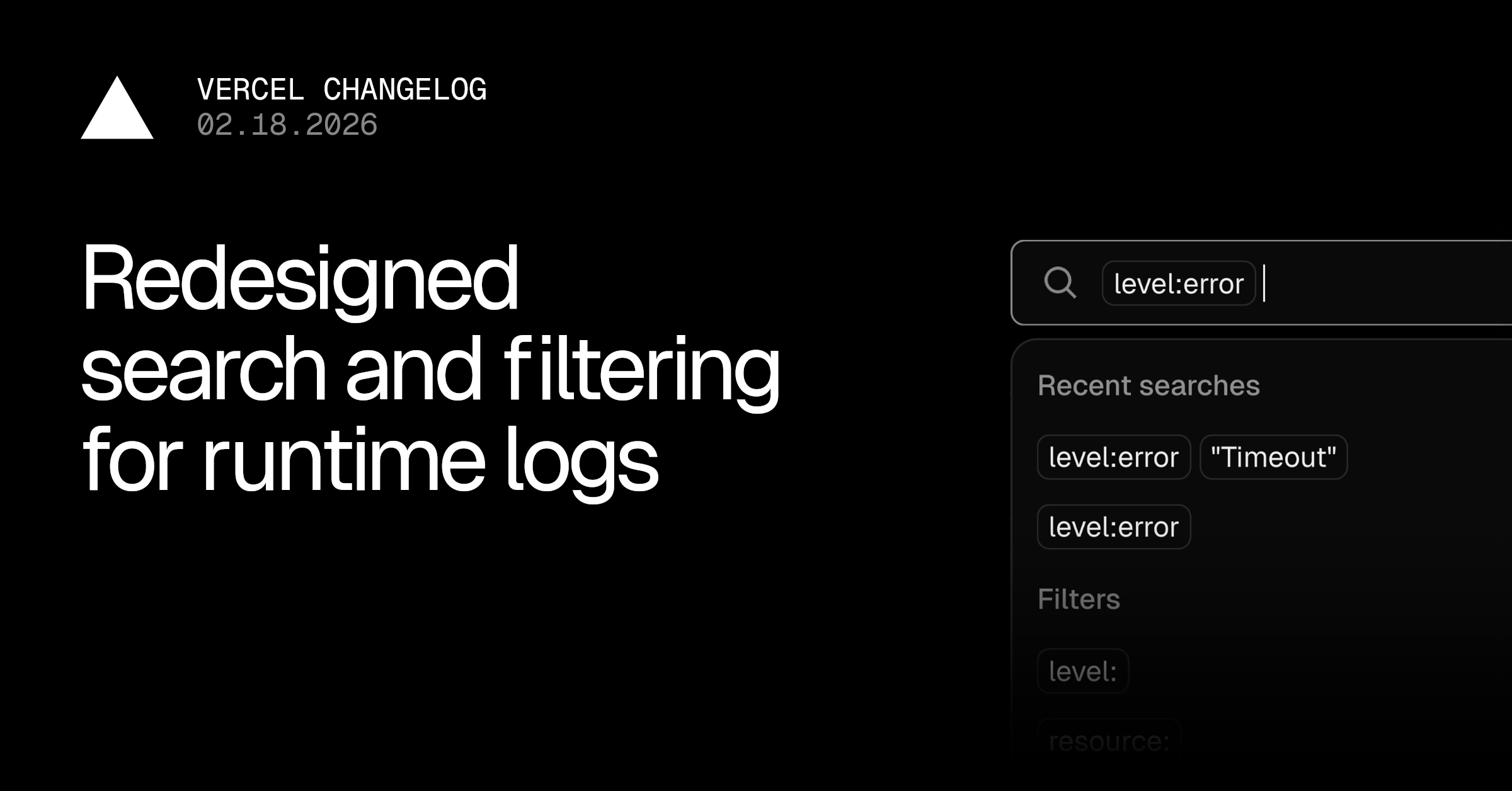

The Logs search bar has been redesigned with visual filter pills, smarter suggestions from your log data, and instant query validation.

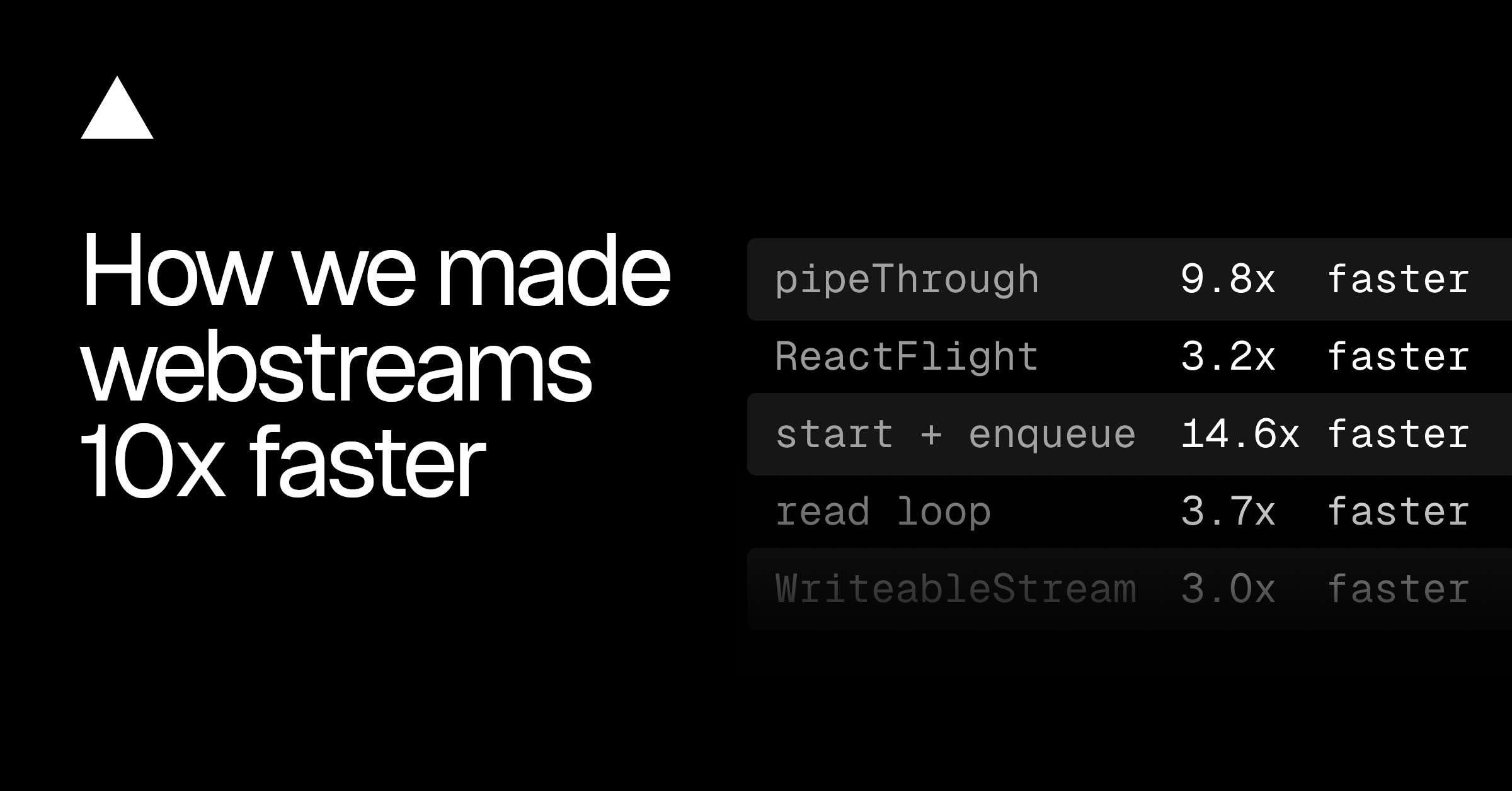

WebStreams had too much overhead on the server. We built a faster implementation. See how we achieved 10-14x gains in Next.js rendering benchmarks.

How Stably, a 6-person team, ships AI testing agents faster with Vercel, moving from weeks to hours. Their shift highlights how Vercel's platform eliminates infrastructure anxiety, boosting autonomous testing and enabling quick enterprise growth.

Skills on skills.sh now have automated security audits to help developers use skills with confidence.

Get automatic code fix suggestions from Vercel Agent when builds fail—directly in GitHub Pull Request reviews or the Vercel Dashboard, based on your code and build logs.

You can now access Anthropic's newest model Claude Sonnet 4.6 via Vercel's AI Gateway with no other provider accounts required.

Snapshots created with Vercel Sandbox can now have a configurable expiration policy instead of the default 30 days, allowing users to keep snapshots indefinitely.

You can now access the Recraft V4 image model via Vercel's AI Gateway with no other provider accounts required.

Runtime Logs exports now stream directly to your browser, export actual requests instead of raw rows, and match exactly what's on your screen or search.

You can now access Alibaba's latest model, Qwen 3.5 Plus, via Vercel's AI Gateway with no other provider accounts required.

The stale-if-error directive is now supported in the Cache-Control header for all responses. It allows the cache to serve a stale response when an error is encountered instead of returning a hard error to the client.
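
As a rough illustration of the directive, the sketch below builds a Cache-Control value that includes stale-if-error. The helper name `cacheHeaders` and the durations are hypothetical, and pairing the directive with `s-maxage` is an assumption about a typical CDN caching setup rather than something this announcement specifies.

```typescript
// Hypothetical helper (not part of any Vercel API): returns response headers
// that let a cache serve a stale copy if the origin errors.
function cacheHeaders(
  freshSeconds: number,
  staleIfErrorSeconds: number
): Record<string, string> {
  return {
    // s-maxage: how long a shared cache may treat the response as fresh.
    // stale-if-error: how long past that point a stale copy may be served
    // when revalidation fails with an error.
    "Cache-Control": `s-maxage=${freshSeconds}, stale-if-error=${staleIfErrorSeconds}`,
  };
}

// Fresh for one minute, usable for up to a day if the origin is failing.
const headers = cacheHeaders(60, 86400);
console.log(headers["Cache-Control"]);
```

The two durations are independent: the first controls normal freshness, the second only applies once an error occurs.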

Any new deployment containing a version of next-mdx-remote that is vulnerable to CVE-2026-0969 will now automatically fail to deploy on Vercel.

You can now access MiniMax M2.5 through Vercel's AI Gateway with no other provider accounts required.

Browserbase is now available on the Vercel Marketplace, allowing teams to run browser automation for AI agents without managing infrastructure.

You can now access Z.AI's latest model, GLM 5, via Vercel's AI Gateway with no other provider accounts required.

Create and manage feature flags in the Vercel Dashboard with targeting rules, user segments, and environment controls. Works seamlessly with the Flags SDK for Next.js and Svelte.

Vercel sandboxes now allow you to restrict internet access to keep exfiltration risks to a minimum.

The vercel logs CLI command has been improved to enable more powerful querying capabilities, and optimized for use by agents.

Vercel now supports Sign in with Apple, enabling faster access for users with Apple accounts and devices

PostHog now integrates directly with Vercel to help teams manage feature rollouts and run experiments without redeploying code. This integration makes it easier for Vercel users to:

Runtime logs are now available via Vercel's MCP server, enabling AI agents to analyze performance, fix errors, and more.

Learn how we built an AI Engine Optimization system to track coding agents using Vercel Sandbox, AI Gateway, and Workflows for isolated execution.

Vercel automatically detects and revokes exposed credentials. Learn about new token formats, new automated secret scanning, and partnership in GitHub's secret scanning program.

Why competitive advantage in AI comes from the platform you deploy agents on, not the agents themselves.

File downloads from Vercel Sandbox are now easier, with new download and read methods added to the Sandbox SDK.

Sanity is now available as a content management system integration on the Vercel Marketplace. Install and configure directly from your Vercel dashboard.

Geist Pixel is a bitmap-inspired typeface built on the same foundations as Geist and Geist Mono, reinterpreted through a strict pixel grid. It’s precise, intentional, and unapologetically digital.

A six-week program to help you scale your AI company offering over $6M in credits from Vercel, v0, AWS, and leading AI platforms

You can now access Anthropic's latest model, Claude Opus 4.6, via Vercel's AI Gateway with no other provider accounts required.

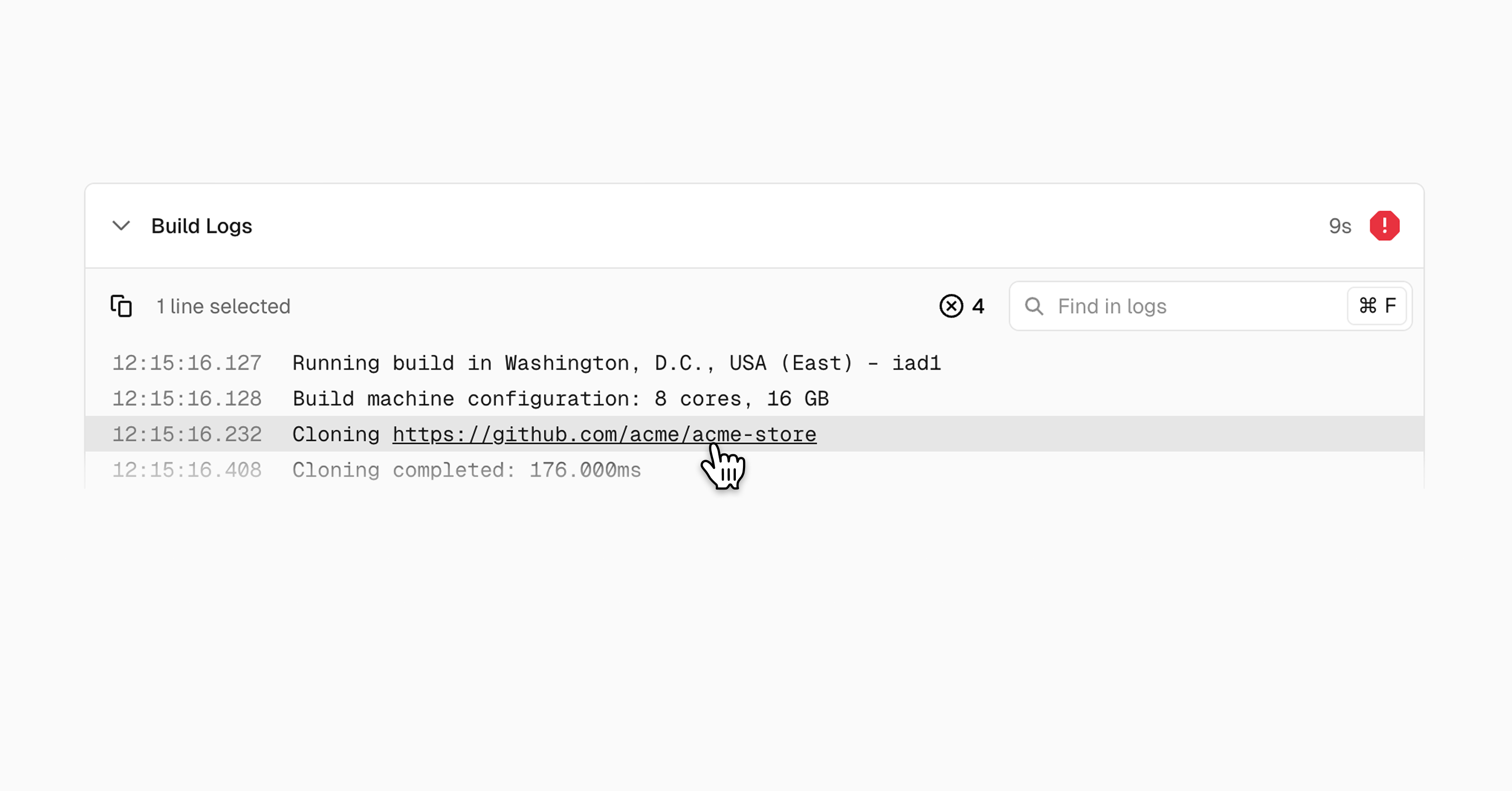

Click URLs directly in build logs to navigate to internal and external resources instantly. No more manual copying and pasting required.

Parallel is now available on the Vercel Marketplace with a native integration for Vercel projects. Developers can add Parallel’s web tools and AI agents to their apps in minutes, including Search, Extract, Tasks, FindAll, and Monitoring.

Parallel's Web Search and other tools are now available on Vercel AI Gateway, AI SDK, and Marketplace. Add web search to any model with domain filtering, date constraints, and agentic mode support.

Vercel now detects and deploys Koa, an expressive HTTP middleware framework to make web applications and APIs more enjoyable to write.

Workflow 4.1 Beta now stores every state change as an event and reconstructs workflow state by replaying them, so failures can be recovered.
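
To make the replay idea concrete, here is a minimal event-sourcing sketch. The event shapes, the `WorkflowState` type, and the `replay` function are all hypothetical illustrations; they are not Workflow's actual event log format or API.

```typescript
// Hypothetical event types for illustration only.
type WorkflowEvent =
  | { type: "step_started"; step: string }
  | { type: "step_completed"; step: string; result: unknown }
  | { type: "step_failed"; step: string; error: string };

interface WorkflowState {
  running: string[];
  completed: Record<string, unknown>;
  failed: string[];
}

// Rebuilds the current state purely from the ordered event log.
// Nothing else is persisted, so a crashed run can be recovered by
// replaying the same events.
function replay(events: WorkflowEvent[]): WorkflowState {
  const state: WorkflowState = { running: [], completed: {}, failed: [] };
  for (const event of events) {
    switch (event.type) {
      case "step_started":
        state.running.push(event.step);
        break;
      case "step_completed":
        state.running = state.running.filter((s) => s !== event.step);
        state.completed[event.step] = event.result;
        break;
      case "step_failed":
        state.running = state.running.filter((s) => s !== event.step);
        state.failed.push(event.step);
        break;
    }
  }
  return state;
}

const recovered = replay([
  { type: "step_started", step: "fetch" },
  { type: "step_completed", step: "fetch", result: 200 },
  { type: "step_started", step: "render" },
]);
console.log(recovered.running, recovered.completed);
```

After a failure, a runtime following this pattern could skip steps already present in `completed` and resume the ones still listed in `running`.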

Copy visual context from Vercel Toolbar comments directly to agents. Streamlines feedback-to-code workflow with deployment details and component data.

Turbo build machines are now the default for new Pro Vercel projects, delivering faster builds with 30 vCPUs/60GB memory—up to 3x faster for long builds.

TRAE now integrates with Vercel for one-click deployments and access to hundreds of AI models through a single API key. Available in both SOLO and IDE modes.

Learn how Vercel uses HTTP content negotiation to serve markdown to agents and HTML to humans from the same URL, reducing response sizes by 90% while keeping both versions synchronized.
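
A minimal sketch of the negotiation step, assuming agents signal their preference with `text/markdown` in the `Accept` header. The `negotiate` function is hypothetical and deliberately ignores q-value weighting, so it is a simplification of full HTTP content negotiation rather than the implementation the post describes.

```typescript
type Representation = "markdown" | "html";

// Picks a representation from the Accept header of a request.
// Simplification: any mention of text/markdown wins; q-values are ignored.
function negotiate(acceptHeader: string | null): Representation {
  if (!acceptHeader) return "html";
  const mediaTypes = acceptHeader
    .split(",")
    .map((part) => (part.split(";")[0] ?? "").trim().toLowerCase());
  return mediaTypes.includes("text/markdown") ? "markdown" : "html";
}

console.log(negotiate("text/markdown, text/html;q=0.8"));
console.log(negotiate("text/html,application/xhtml+xml"));
```

When one URL serves several representations like this, responses generally also need a `Vary: Accept` header so caches keep the markdown and HTML variants separate.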

Vercel is opening its open source software bug bounty program to the public so researchers can find vulnerabilities and make OSS safer.

The new v0 brings production-ready AI coding to enterprises with git workflows, security, and real integrations. Ship faster with agents and teams.

Python versions 3.13 and 3.14 are now available for use in Vercel Builds and Vercel Functions. Python 3.14 is the new default version for new projects and deployments.

AI agents need secure, isolated environments that spin up instantly. Vercel Sandbox is now generally available with filesystem snapshots, container support, and production reliability.

Cubic joins the Vercel Agents Marketplace, offering teams an AI code reviewer with full codebase context, unified billing, and automated fixes.

Vercel Sandbox is now GA, providing isolated Linux VMs for safely executing untrusted code. Run AI agent output, user uploads, and third-party code without exposing production systems.

AssistLoop is now available in the Vercel Marketplace, making it easy to add AI-powered customer support to Next.js apps deployed on Vercel.

Stripe built a production GTM value calculator in a single flight using v0, boosting adoption 288% and cutting value analysis time by 80%.

Sensay went from zero to an MVP launch in six weeks for Web Summit. With Vercel preview deployments, feature flags, and rollbacks, the team shipped fast without a DevOps team.

A compressed 8KB docs index in AGENTS.md achieved 100% on Next.js 16 API evals. Skills maxed at 79%. Here's what we learned and how to set it up.

You can now access Arcee AI's Trinity Large Preview model via AI Gateway with no other provider accounts required.

You can now access Qwen 3 Max Thinking via Vercel's AI Gateway with no other provider accounts required.

You can now access Moonshot AI's Kimi K2.5 model via Vercel's AI Gateway with no other provider accounts required.

A plainspoken Skills FAQ with a ready-to-use guide: what skill packages are, how agents load them, what skills-ai.dev is, how Skills compare to MCP, plus security and alternatives.

You can use your Claude Code Max subscription through Vercel's AI Gateway. This lets you leverage your existing subscription while gaining centralized observability, usage tracking, and monitoring capabilities for all your Claude Code requests.

You can now view live model performance metrics for latency and throughput on Vercel AI Gateway on the website and via REST API.

You can use Vercel AI Gateway with Clawdbot and access hundreds of models with no additional API keys required.

We tested bash vs SQL agents on structured data queries. SQL dominated, but combining both tools achieved 100% accuracy. Try the open-source eval harness.

Skills support is now available in bash-tool, so your AI SDK agents can use the skills pattern with filesystem context, Bash execution, and sandboxed runtime access.

A brand new set of components designed to help you build the next generation of IDEs, coding apps and background agents.

Introducing skills, a CLI for installing and managing agent “skill packages.” Add a skill package with npx skills add <package>, with more commands planned.

You can now access image models from Recraft in Vercel AI Gateway with no other provider accounts required.

Users can opt in to an experimental build mode for backend frameworks like Hono or Express. The new behavior allows logs to be filtered by route, similar to Next.js and other frameworks. It also improves the build process in several ways.

SSH into running Sandboxes using the Vercel Sandbox CLI. Open secure, interactive shell sessions, with timeouts automatically extended in 5-minute increments for up to 5 hours.

v0 can now provision AWS databases as it builds your app. Aurora PostgreSQL, DynamoDB, and Aurora DSQL available in the Vercel Marketplace.

Use the OpenResponses API on Vercel AI Gateway with no other API keys required and support for multiple providers.

We've shipped optimizations that reduce build overhead, particularly for projects with many input files, large node_modules, or extensive build outputs.

You can use Perplexity web search with any model and any provider on Vercel AI Gateway to access real-time results and up-to-date information.

Node.js runtime in Vercel Sandbox now defaults to Node.js 24 for newer features and performance improvements.

Vercel AI Gateway now supports Perplexity Web Search as a model-agnostic tool that works for all models and providers. Use the tool to get access to the most recent information to supplement your AI queries.

We've encapsulated 10+ years of React and Next.js optimization knowledge into react-best-practices, a structured repository optimized for AI agents and LLMs.

Today we're releasing a brand new set of components for AI Elements designed to work with the Transcription and Speech functions of the AI SDK, helping you build voice agents.

You can now access the GPT 5.2 Codex model on Vercel's AI Gateway with no other provider accounts required.

Nick Bogaty joins Vercel as first Chief Revenue Officer from Adobe, bringing more than 20 years of enterprise GTM experience to lead an AI-forward sales organization.

Learn how Mux built durable AI video workflows into their @mux/ai SDK using Workflow DevKit, with automatic retries, state persistence, and zero infrastructure setup.

How to build agents with filesystems and bash: production agents, context management, and a template.

v0’s composite AI pipeline boosts reliability by fixing errors in real time. Learn how dynamic system prompts, LLM Suspense, and autofixers work together to deliver stable, working web app generations at scale.

Use Vercel AI Gateway from Claude Code via the Anthropic-compatible endpoint, with a URL change and AI Gateway usage and cost tracking.

Internal tools often decay due to high maintenance costs and security tradeoffs. Learn how Vercel uses v0 to build secure, sustainable custom software that business teams can ship and maintain without pulling engineers off the roadmap.

How Vercel built AI-generated pixel trading cards for Next.js Conf and Ship AI, then turned the same pipeline into a v0 template and festive holiday experiment.

We spent months building a sophisticated text-to-sql agent, but as it turns out, sometimes simpler is better. Giving it the ability to execute arbitrary bash commands outperformed everything we built. We call this a file system agent.

You can now access the Z.ai GLM-4.7 model on Vercel's AI Gateway with no other provider accounts required.

Introducing agents, tool execution approval, DevTools, full MCP support, reranking, image editing, and more.

You can now access the Gemini 3 Flash model on Vercel's AI Gateway with no other provider accounts required.

Cline scales its open source coding agent with Vercel AI Gateway, delivering global performance, transparent pricing, and enterprise reliability.

A database of tutorials, videos, and best practices for building on Vercel, written by engineers from across the company.

You can now access the GPT 5.2 models on Vercel's AI Gateway with no other provider accounts required.

You can now access the GPT 5.1 Codex Max model with Vercel's AI Gateway with no other provider accounts required.

You can now access Amazon's latest model Nova 2 Lite on Vercel AI Gateway with no other provider accounts required.

You can now access Mistral's latest model, Mistral Large 3, on Vercel AI Gateway with no other provider accounts required.

You can now access the newest DeepSeek V3.2 models, V3.2 and V3.2 Speciale, in Vercel AI Gateway with no other provider accounts required.

You can now access the newest Arcee AI model Trinity Mini in Vercel AI Gateway with no other provider accounts required.

You can now access Prime Intellect AI's Intellect-3 model in Vercel AI Gateway with no other provider accounts required.

You can now access the newest image model FLUX.2 Pro from Black Forest Labs in Vercel AI Gateway with no other provider accounts required.

You can now access Anthropic's latest model Claude Opus 4.5 in Vercel AI Gateway with no other provider accounts required.

At Vercel, we’re building self-driving infrastructure, a system that autonomously manages production operations, improves application code using real-world insights, and learns from the unpredictable nature of production itself.

You can now access xAI's Grok 4.1 models in Vercel AI Gateway with no other provider accounts required.

You can now access Google's latest model Nano Banana Pro (Gemini 3 Pro Image) in Vercel AI Gateway with no other provider accounts required.

You can now access Google's latest model Gemini 3 Pro in Vercel AI Gateway with no other provider accounts required.

The Gemini 3 Pro Preview model, released today, is now available through AI Gateway and in production on v0.app.

You can now access the two GPT 5.1 Codex models with Vercel's AI Gateway with no other provider accounts required.

You can now access the two GPT 5.1 models with Vercel's AI Gateway with no other provider accounts required.

Model fallbacks are now supported in Vercel AI Gateway in addition to provider routing, giving you failover options when models fail or are unavailable.

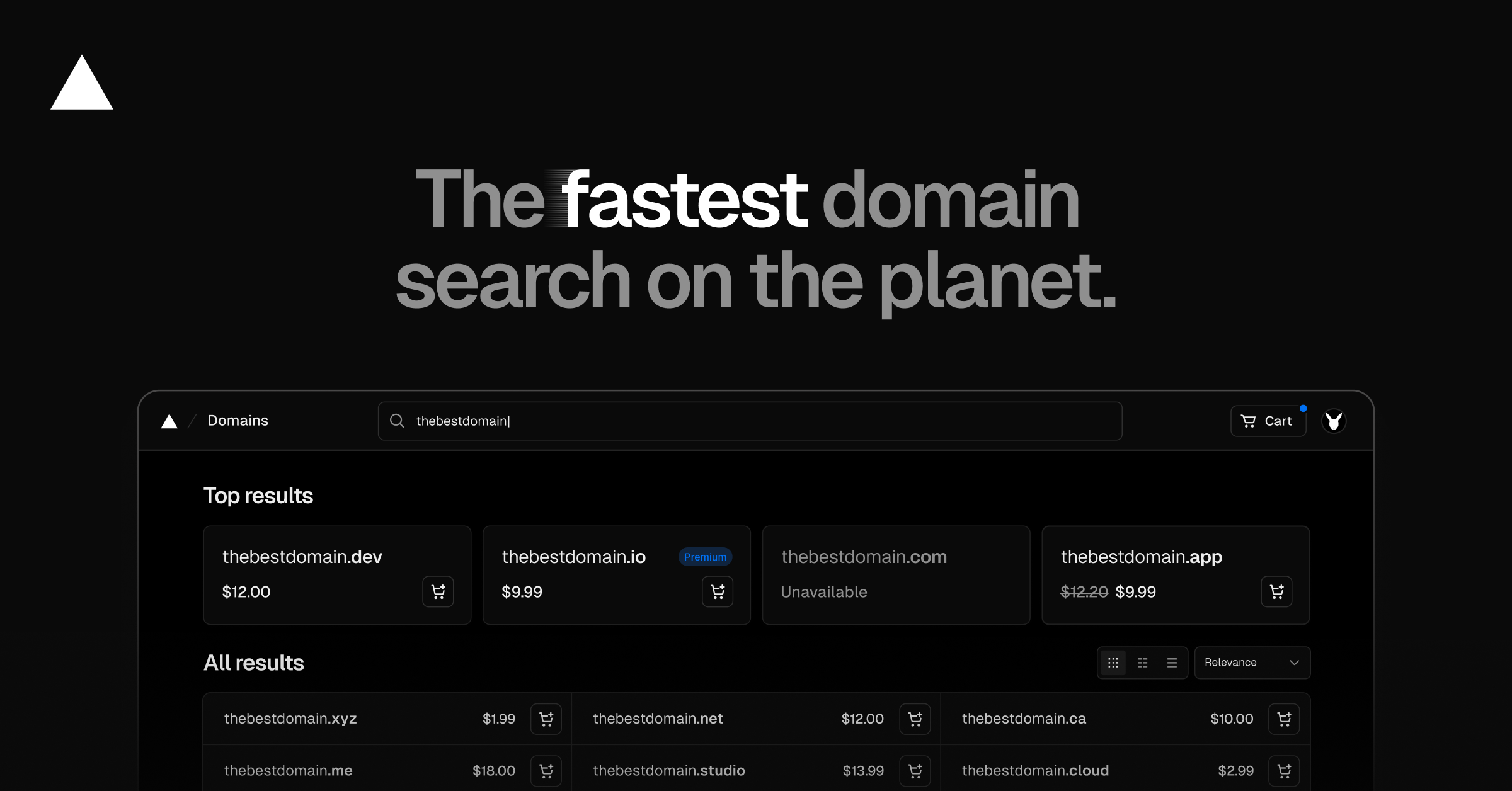

You can now search for domains on Vercel using AI. Results are fast and creative, and you have full control via the search bar.

Vercel BotID Deep Analysis protected Nous Research by blocking advanced automated abuse targeting their application.

A low-severity input validation bypass vulnerability in the Vercel AI SDK has been mitigated and fixed.

You can now access Moonshot AI's Kimi K2 Thinking and Kimi K2 Thinking Turbo with Vercel's AI Gateway with no other provider accounts required.

The AI Gateway is a simple application deployed on Vercel, but it achieves scale, efficiency, and resilience by running on Fluid compute and leveraging Vercel’s global infrastructure.

Improved observability for redirects and rewrites is now generally available under the Observability tab on Vercel's Dashboard. Redirect and rewrite request logs are now drainable to any configured Drain.

Microfrontends are now generally available, enabling you to split large applications into smaller units that render as one cohesive experience for users.

Vercel now detects and deploys Fastify, a fast and low overhead web framework, with zero configuration.

BotID Deep Analysis is a sophisticated, invisible bot detection product. This article is about how BotID Deep Analysis adapted to a novel attack in real time, and successfully classified sessions that would have slipped through other services.

Vercel Agent Investigation intelligently conducts incident response investigations to alert, analyze, and suggest remediation steps.

Details on ISR cache keys, cache tags, and cache revalidation reasons are now available in Runtime Logs for all customers.

You can now access OpenAI's GPT-OSS-Safeguard-20B with Vercel's AI Gateway with no other provider accounts required.

Vercel has achieved TISAX Assessment Level 2 security standard used in the automotive and manufacturing industries

Vercel has achieved TISAX Assessment Level 2 security standard to align with automotive and manufacturing industries

You can now access MiniMax M2 with Vercel's AI Gateway for free with no other provider accounts required.

The Bun runtime is now available in Public Beta for Vercel Functions. Benchmarks show Bun reduced average latency by 28% for CPU-bound Next.js rendering compared to Node.js.

Vercel Functions now supports the Bun runtime, giving developers faster performance options and greater flexibility for optimizing JavaScript workloads.

David Totten joins Vercel as VP of Global Field Engineering from Databricks to oversee Sales Engineering, Developer Success, Professional Services, and Customer Support Engineering under one integrated organization

Earlier this year we introduced the foundations of the AI Cloud: a platform for building intelligent systems that think, plan, and act. At Ship AI, we showed what comes next. What and how to build with the AI Cloud.

AI Chat is now live on Vercel docs. Ask questions, load docs as context for page-aware answers, and copy chats as Markdown for easy sharing.

Vercel Firewall and Observability Plus can now configure Custom Rules targeting specific server actions

Discover AI Agents & Services on the Vercel Marketplace. Integrate agentic tools, automate workflows, and build with unified billing and observability.

Vercel Agent can now automatically run AI investigations on anomalous events for faster incident response.

Build, scale, and orchestrate AI backends on Vercel. Deploy Python or Node frameworks with zero config and optimized compute for agents and workflows.

Agents and Tools are available in the Vercel Marketplace, enabling AI-powered workflows in your projects with native integrations, unified billing, and built-in observability.

Vercel AI Cloud combines unified model routing and failover, elastic cost-efficient compute that only bills for active CPU time, isolated execution for untrusted code, and workflow durability that survives restarts, deploys, and long pauses.

Braintrust joins the Vercel Marketplace with native support for the Vercel AI SDK and AI Gateway, enabling developers to monitor, evaluate, and improve AI application performance in real time.

You can now access Claude Haiku 4.5 with Vercel's AI Gateway with no other provider accounts required.

Vercel and Salesforce are partnering to help teams build, ship, and scale AI agents across the Salesforce ecosystem, starting with Slack.

Use the Apps SDK, Next.js, and mcp-handler to build and deploy ChatGPT apps on Vercel, complete with custom UI and app-specific functionality.

Vercel now uses uv, a fast Python package manager written in Rust, as the default package manager during the installation step for all Python builds.

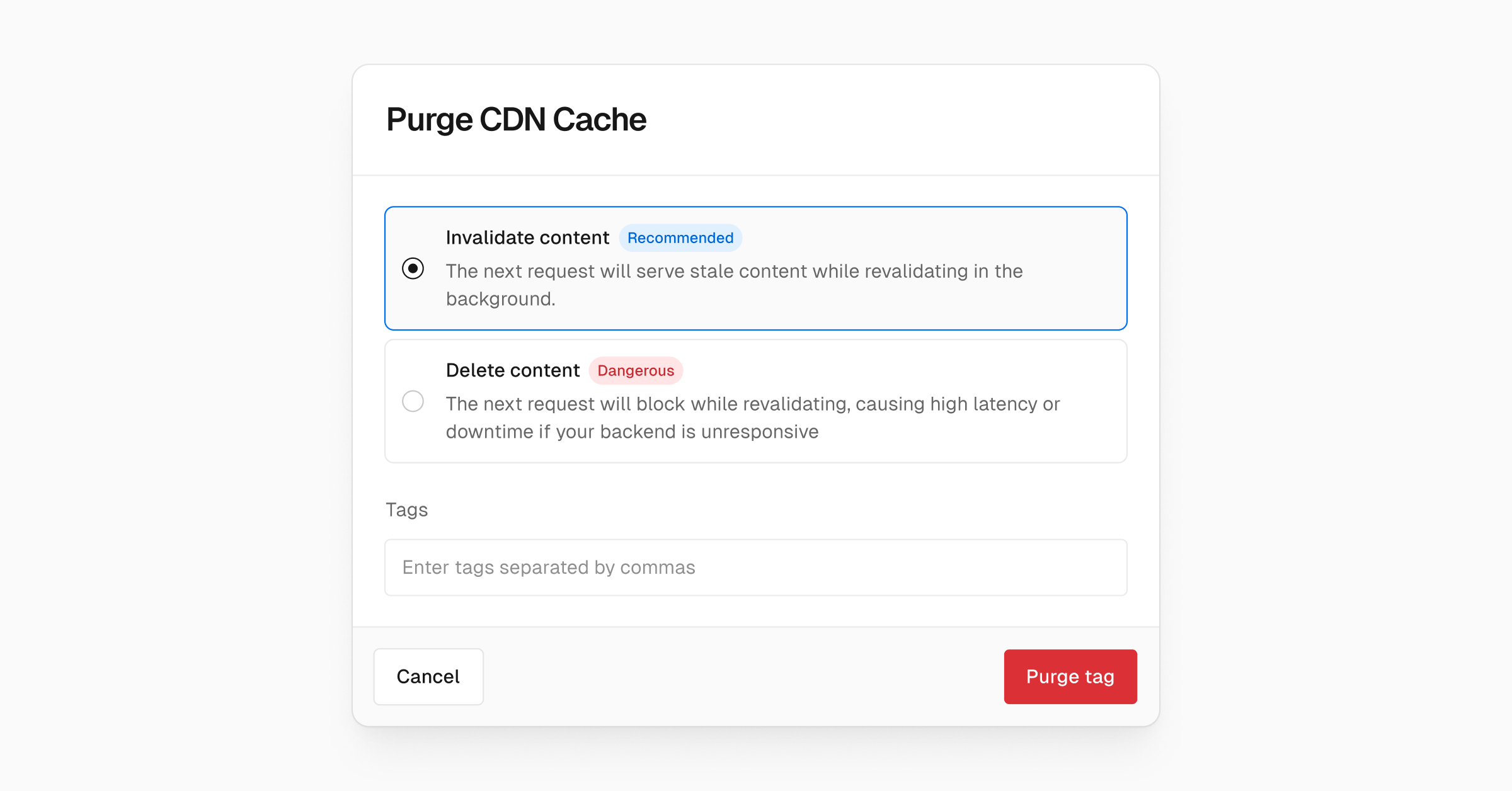

You can now invalidate CDN cache contents by tag, providing a way to revalidate content without increasing latency for your users.

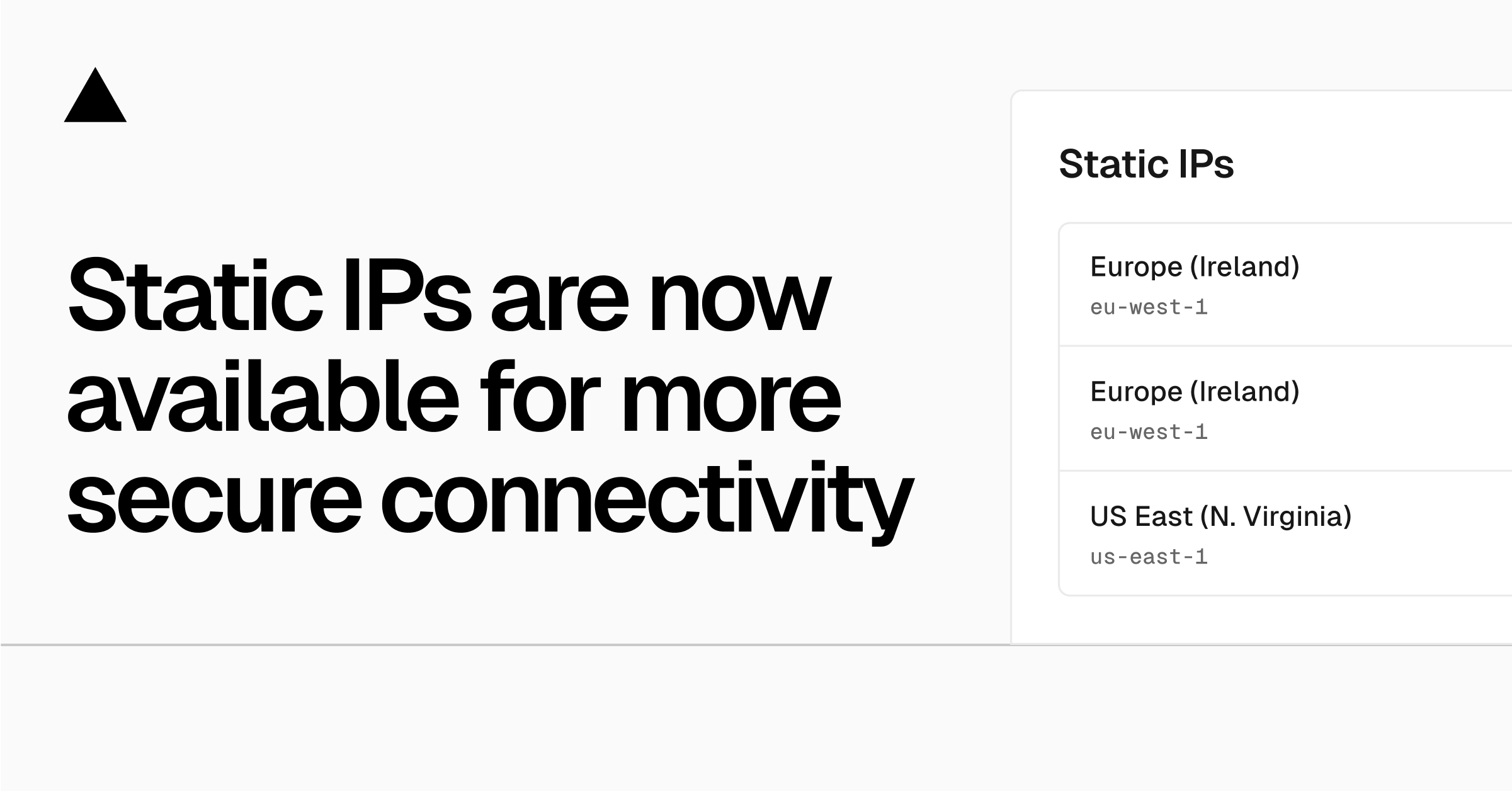

Teams on Pro and Enterprise can now access Static IPs to connect to services that require IP allowlisting. Static IPs give your projects consistent outbound IPs without needing the full networking stack of Secure Compute.

You can now enable Fluid Compute on a per-deployment basis by setting "fluid": true in your vercel.json.

Publishing v0 apps on Vercel got 1.1 seconds faster on average due to some optimizations on sending source files during deployment creation.

Today, Vercel announced an important milestone: a Series F funding round valuing our company at $9.3 billion.

Stripe claimable sandboxes are now available on the Vercel Marketplace and v0. You can test your flow fully in test mode and claim it when you're ready to go live.

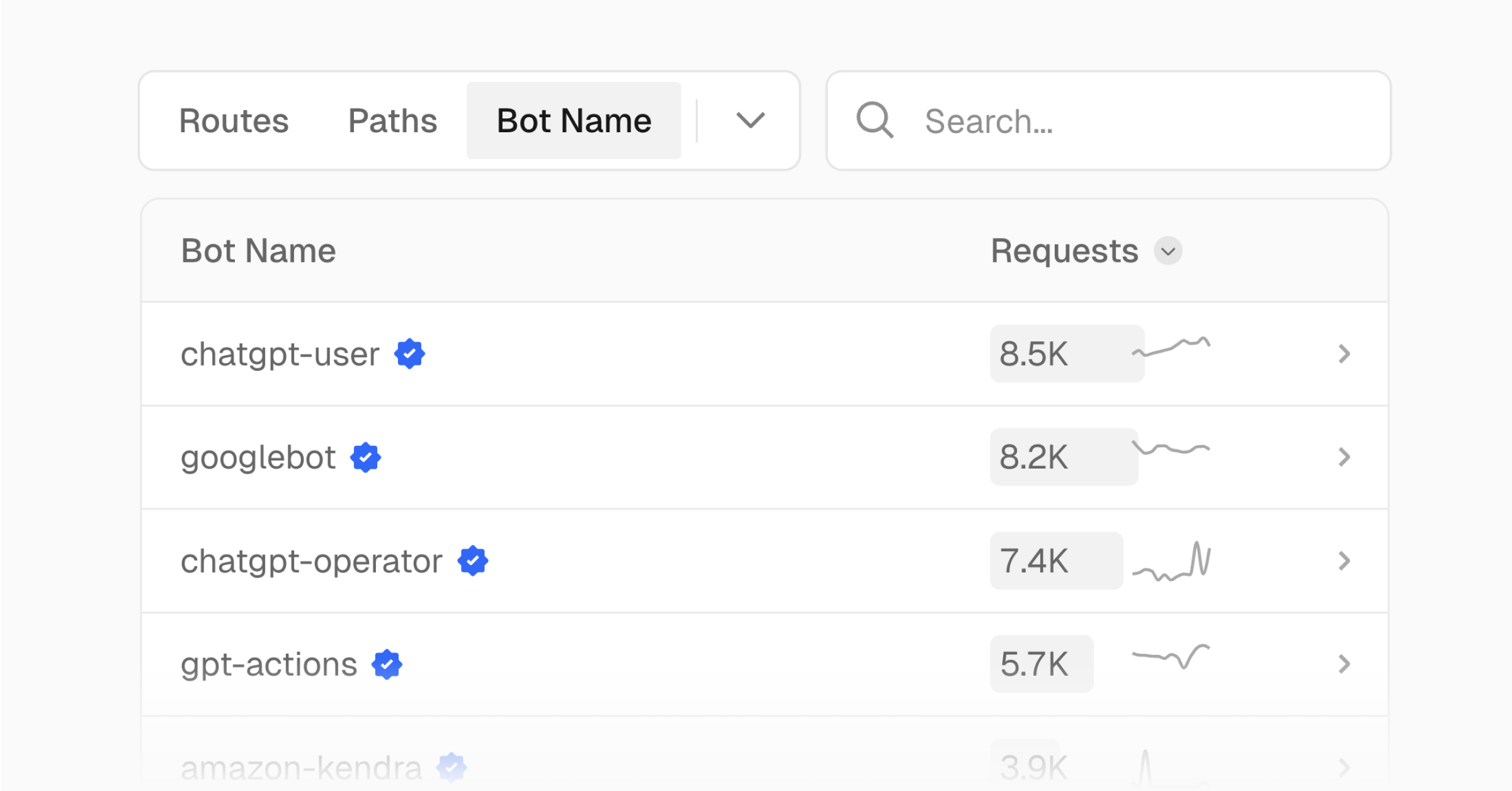

Analyze traffic to your Vercel projects by bot name, bot category, and bot verification status in Vercel Observability

Claude Sonnet 4.5 is now available on Vercel AI Gateway and across the Vercel AI Cloud. Also introducing a new coding agent platform template.

Vercel Functions using Node.js can now detect when a request is cancelled and stop execution before completion. This includes actions like navigating away, closing a tab, or hitting stop on an AI chat to terminate compute processing early.

FastAPI, a modern, high-performance web framework for building APIs with Python based on standard Python type hints, is now supported with zero configuration.

We rebuilt the Vercel Domains experience to make search and checkout significantly faster and more reliable.

Vercel now does regional request collapsing on cache miss for Incremental Static Regeneration (ISR).

The Vercel CDN now supports request collapsing for ISR routes. For a given path, only one function invocation per region runs at once, no matter how many concurrent requests arrive.
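
The general technique can be sketched as in-flight promise sharing: concurrent requests for the same path reuse one pending render. The `collapsed` function, the in-memory `Map`, and the `render` callback are hypothetical stand-ins for illustration; this is not how the Vercel CDN is implemented, and it models a single process rather than a region.

```typescript
// Pending renders keyed by path. Entries exist only while work is in flight.
const inFlight = new Map<string, Promise<string>>();

// Returns the shared pending render for a path, starting one only if
// none is already running.
function collapsed(
  path: string,
  render: (path: string) => Promise<string>
): Promise<string> {
  const existing = inFlight.get(path);
  if (existing) return existing;

  // Remove the entry once the render settles so later requests
  // trigger a fresh invocation.
  const pending = render(path).finally(() => inFlight.delete(path));
  inFlight.set(path, pending);
  return pending;
}

let invocations = 0;
const slowRender = (path: string): Promise<string> => {
  invocations += 1;
  return new Promise((resolve) => setTimeout(() => resolve(`<html>${path}</html>`), 10));
};

// Three concurrent requests for one path share a single invocation.
const results = [
  collapsed("/blog/post", slowRender),
  collapsed("/blog/post", slowRender),
  collapsed("/blog/post", slowRender),
];
console.log(invocations, results.length);
```

Requests for different paths are not collapsed together, since each path has its own map entry.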

It's now possible to run custom queries against all external API requests that were made from Vercel Functions.

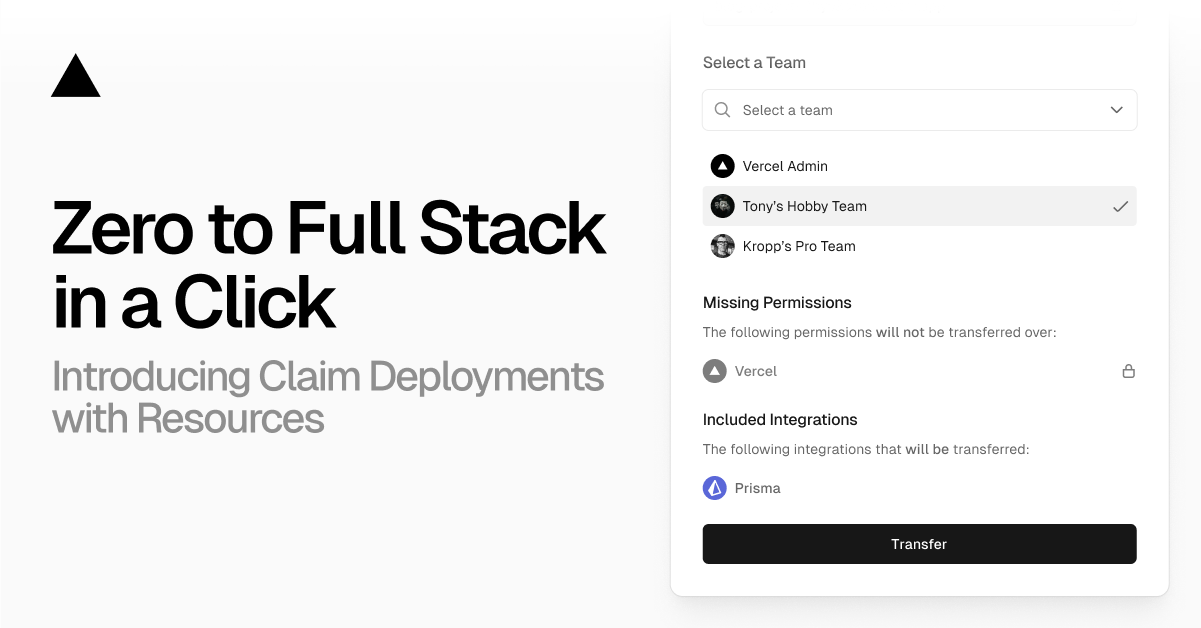

Vercel now supports transferring resources like databases between teams as part of the Claim Deployments flow. Developers building AI agents, no-code tools, and workflow apps can instantly deploy projects and resources

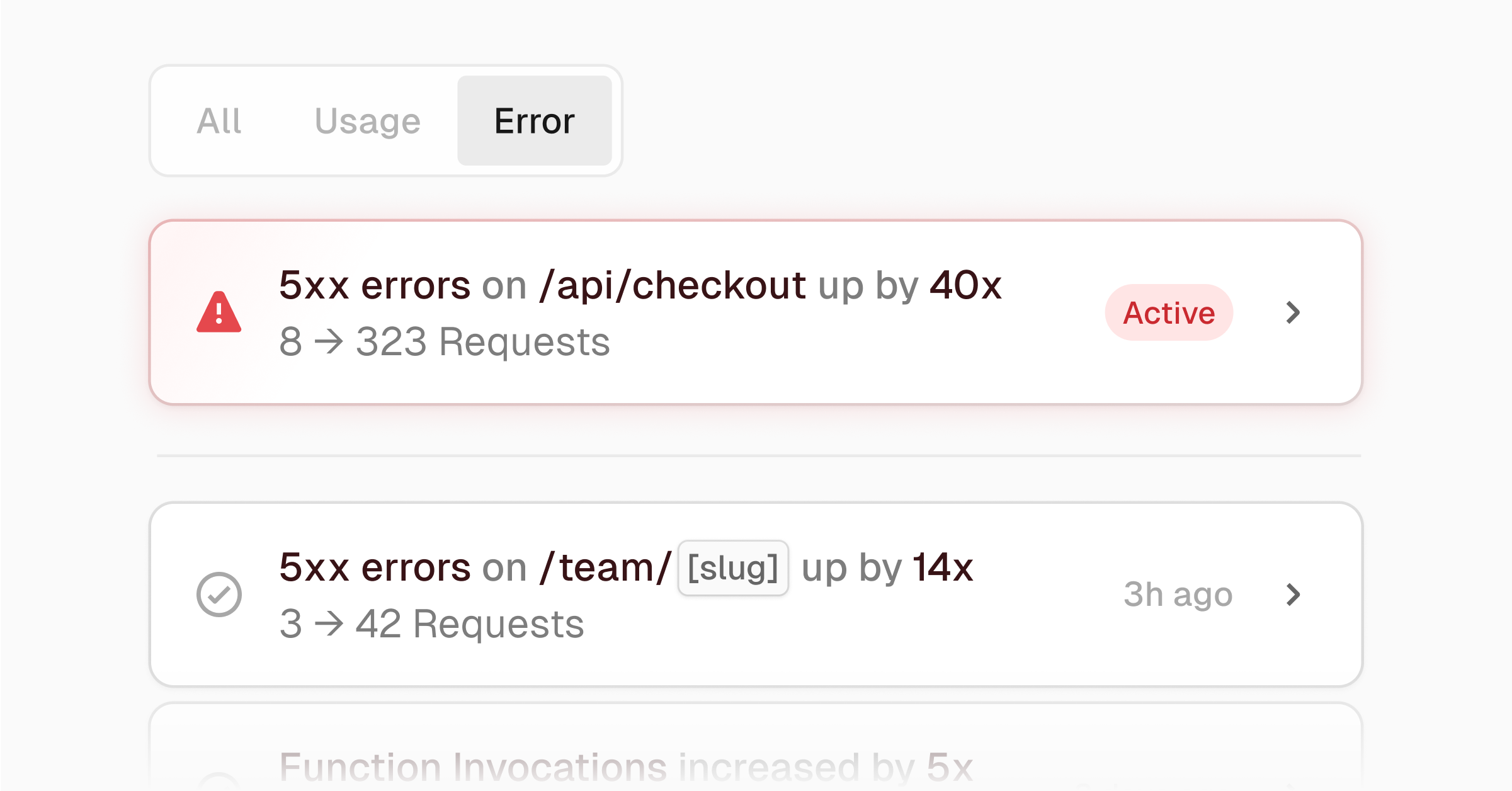

Enterprise customers with Observability Plus can now receive anomaly alerts for errors through the limited beta

A financial institution's suspicious bot traffic turned out to be Google bots crawling SEO-poisoned URLs from years ago. Here's how BotID revealed the real problem.

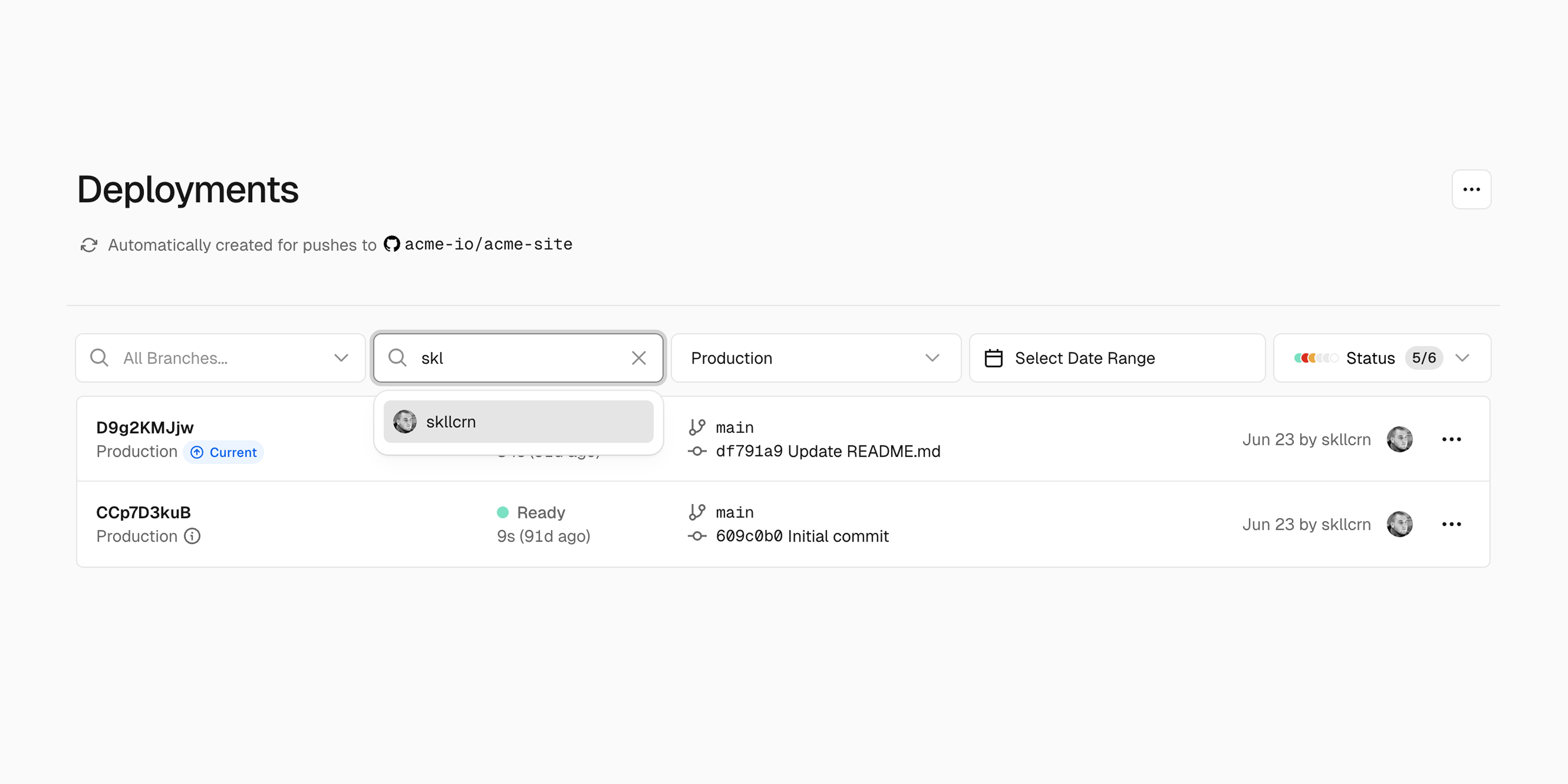

Easily filter deployments on the Vercel dashboard by the Vercel username or email, or git username (if applicable).